Introduction

The artificial intelligence landscape has never been more polarized. On one side, tech giants such as OpenAI and Google keep their strongest models behind API walls, earning billions in revenue while guarding their secret sauce. On the other, Meta, DeepSeek, and a growing number of open-source proponents are democratizing AI by releasing model weights, training code, and research papers under permissive licenses.

This is not merely a technical debate; it is a philosophical battle over who controls the future of artificial intelligence. Will AI be concentrated in the hands of a few technology monopolies, or will it become a global resource? The answer will shape everything from healthcare innovation to economic inequality for decades to come.

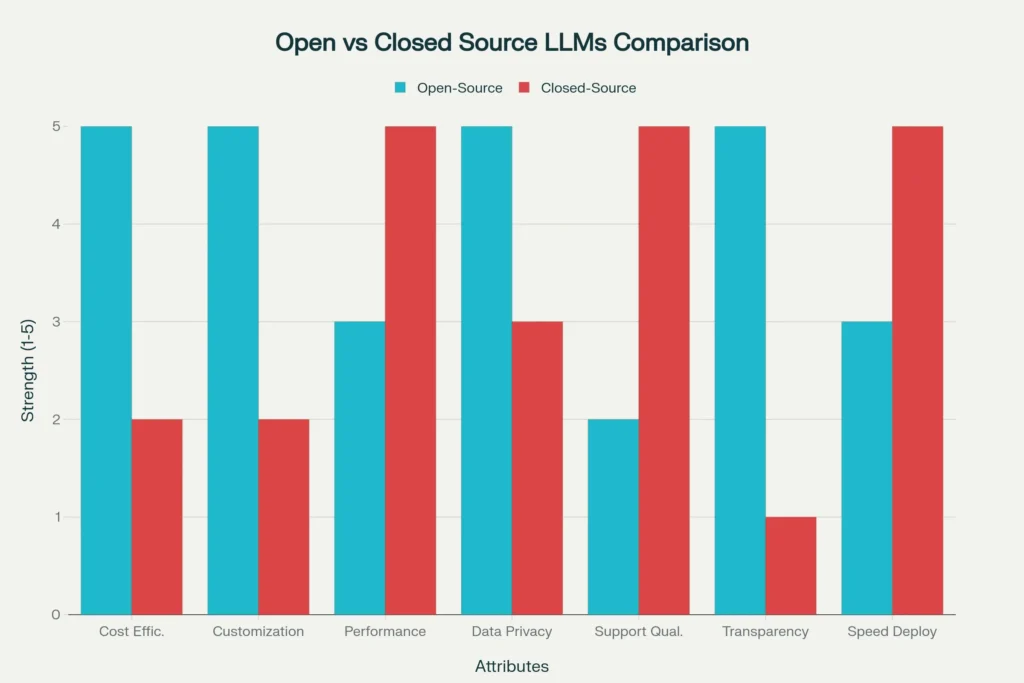

Comparison of Open-Source vs Closed-Source LLMs across key characteristics

Open-Source vs. Closed-Source LLMs: A Comprehensive Comparison

Here’s a detailed comparison of open-source and closed-source LLMs across important attributes:

The Great AI Divide: The Battlefield

Think of this as the early days of the internet. Closed-source LLMs are walled gardens like AOL: polished, managed, and lucrative, yet ultimately restrictive. Open-source models? They are the wild frontier of the web: messy, chaotic, and full of creativity and potential.

Closed-source LLMs keep their architecture, training data, and model weights under strict corporate secrecy. They can be accessed only through APIs at a per-token fee, and the vendor retains full control over your data and usage patterns. Examples include OpenAI’s GPT-4, Anthropic’s Claude, and Google’s Gemini, the giants that dominate headlines and enterprise deals.

Open-source LLMs flip this model. They make model weights, training code, and often technical reports available for anyone to download, modify, and deploy. Meta’s Llama series, DeepSeek’s R1, and Mistral’s models embody this philosophy of open development and community-driven improvement.

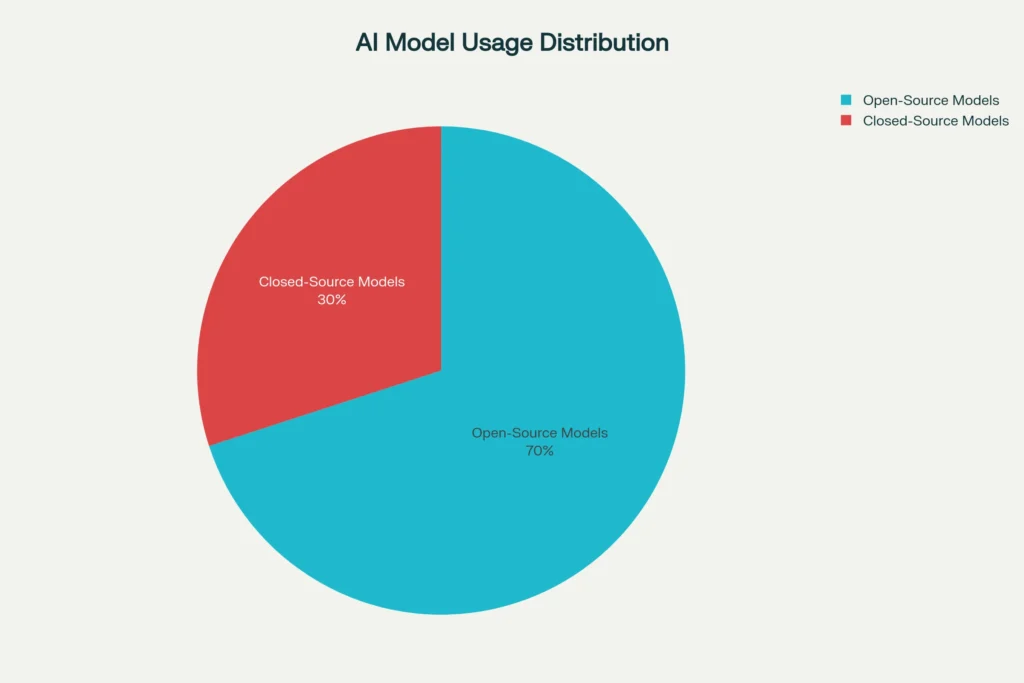

The stakes couldn’t be higher. A recent industry analysis estimates that open-source models will power 70 percent of commercial AI applications, a seismic shift that would end the closed-source dominance we have seen since the release of ChatGPT.

Market distribution showing 70% open-source vs 30% closed-source AI model usage in commercial applications

The Performance Wars: David vs. Goliath Story Gets Complicated

For years, the performance gap seemed insurmountable. GPT-4’s advanced reasoning and Claude’s nuanced writing made open-source alternatives look like toys. But that story is unraveling quickly.

DeepSeek R1’s Breakthrough Moment

That changed in January 2025, when DeepSeek released R1, an open-source reasoning model that competes with OpenAI’s o1 at a reported 95% lower training cost. This was not an incremental improvement but a radical shift that sent ripples through the entire AI industry.

The numbers tell the story:

79.8% Pass@1 on AIME 2024, slightly ahead of OpenAI-o1.

97.3% on MATH-500, on par with OpenAI’s flagship.

A 2,029 Elo rating on Codeforces, higher than 96.3 percent of human participants.

Meta’s Llama Evolution

Meta’s journey from Llama 1 to Llama 3 shows how open-source development accelerates innovation. Within months of Llama 2’s release, more than a thousand specialized variants had appeared, each building on what came before. Llama 3 70B can now deliver GPT-4-level performance at GPT-3.5 prices, running up to 50x cheaper and 10x faster than proprietary solutions.

The Closing Gap

According to independent benchmarks, the performance gap is narrowing quickly:

MMLU: Llama 3 scores 82% vs. GPT-4 Turbo’s 86.4%.

Graduate-level reasoning: Llama 3 scores 35.7% vs. GPT-4’s 39.5%.

Code generation: DeepSeek R1 achieves results competitive with expert programmers.
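To make the size of the remaining gap concrete, the short sketch below computes the absolute gap in percentage points and the open model's score relative to the closed one. It uses only the two benchmark pairs quoted above; the function name `gap_summary` is illustrative, not part of any benchmark tooling.

```python
def gap_summary(open_score: float, closed_score: float) -> dict:
    """Return the absolute gap (percentage points) and the open/closed score ratio."""
    if closed_score <= 0:
        raise ValueError("closed_score must be positive")
    return {
        "gap_points": round(closed_score - open_score, 1),
        "open_relative_to_closed": round(open_score / closed_score, 3),
    }


# Scores quoted in the article (percent correct): (open model, closed model).
benchmarks = {
    "MMLU": (82.0, 86.4),
    "Graduate-level reasoning": (35.7, 39.5),
}

for name, (open_score, closed_score) in benchmarks.items():
    print(name, gap_summary(open_score, closed_score))
```

On these figures the open model trails by fewer than five percentage points on both benchmarks.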

Affordability and Availability: The Great Leveler

This is where open-source models deliver their knockout punch. While GPT-4 API calls can cost thousands of dollars in large-scale applications, Llama 3 can be self-hosted on modest cloud infrastructure.

Breaking Down the Economics

| Model Type | Upfront Cost | Ongoing Cost | Scalability | Control |
| --- | --- | --- | --- | --- |
| Closed-Source | None | $0.12 per 1K tokens | Limited by vendor | Minimal |
| Open-Source | Full infrastructure investment | Compute costs only | Unlimited | Complete |
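The trade-off in the table, a fixed upfront investment against a per-token fee, can be expressed as a break-even calculation. The sketch below is a rough illustration only: the $0.12 per 1K tokens figure comes from the table, while the upfront cost and the self-hosted compute cost per 1K tokens are hypothetical placeholders to replace with your own estimates.

```python
def break_even_tokens(upfront_cost: float,
                      self_hosted_cost_per_1k: float,
                      api_cost_per_1k: float) -> float:
    """Tokens at which cumulative API spend equals upfront cost plus self-hosted compute."""
    saving_per_1k = api_cost_per_1k - self_hosted_cost_per_1k
    if saving_per_1k <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return upfront_cost / saving_per_1k * 1_000


API_COST_PER_1K = 0.12          # closed-source ongoing cost from the table
UPFRONT_COST = 250_000.0        # hypothetical infrastructure investment (USD)
SELF_HOSTED_COST_PER_1K = 0.02  # hypothetical compute cost per 1K tokens (USD)

tokens = break_even_tokens(UPFRONT_COST, SELF_HOSTED_COST_PER_1K, API_COST_PER_1K)
print(f"Break-even at roughly {tokens / 1e9:.2f} billion tokens")
```

Below the break-even volume the API is cheaper; above it, the infrastructure investment starts to pay for itself. Staffing costs, such as an MLOps team, are not modeled here and would push the break-even point higher.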

Real-World Impact

One Fortune 100 telecom company running Llama 3 on custom hardware cut the total cost of ownership of its conversational AI by 40%, although this required investing in an in-house MLOps team. The math is even more compelling for startups and smaller organizations.

DeepSeek R1’s pricing illustrates the disparity:

$0.55 per million input tokens.

$2.19 per million output tokens.

This contrasts with enterprise-grade closed models, where costs can grow rapidly with scale.
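As a rough illustration of how these prices play out at volume, the sketch below prices one monthly workload under both schemes. The DeepSeek R1 prices are the ones quoted above and the closed-source figure is the $0.12 per 1K tokens from the economics table; applying that single rate to both input and output tokens, and the workload size itself, are assumptions made for the example.

```python
def token_cost_usd(input_tokens: int, output_tokens: int,
                   input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost in USD given token counts and per-million-token prices."""
    return (input_tokens / 1_000_000 * input_price_per_m
            + output_tokens / 1_000_000 * output_price_per_m)


# DeepSeek R1 prices quoted in the article (USD per million tokens).
R1_INPUT_PER_M = 0.55
R1_OUTPUT_PER_M = 2.19

# Closed-source figure from the economics table: $0.12 per 1K tokens,
# i.e. $120 per million, applied to input and output alike (an assumption).
CLOSED_PER_M = 0.12 * 1_000

# Hypothetical monthly workload.
INPUT_TOKENS = 500_000_000
OUTPUT_TOKENS = 100_000_000

r1_cost = token_cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, R1_INPUT_PER_M, R1_OUTPUT_PER_M)
closed_cost = token_cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, CLOSED_PER_M, CLOSED_PER_M)

print(f"DeepSeek R1:   ${r1_cost:,.2f}")
print(f"Closed-source: ${closed_cost:,.2f}")
print(f"Ratio:         {closed_cost / r1_cost:.0f}x")
```

For this hypothetical workload the bill comes to about $494 against $72,000. Real closed-source pricing varies by model and by input versus output tokens, so treat the ratio as indicative only.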

Data Privacy and Security: The Trust Equation

In regulated sectors such as healthcare and finance, data sovereignty is no longer optional; it is a legal requirement. This is where open-source models shine brightest.

The Self-Hosting Advantage

With open-source LLMs, sensitive data never leaves your infrastructure. You can deploy models in air-gapped environments, meet strict regulatory requirements, and maintain full audit trails. With closed-source APIs, even under VPC configurations, you must trust a third party with your most sensitive information.
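One way to picture the audit-trail point is a thin wrapper around a self-hosted model call that records who asked what, without storing the sensitive prompt itself. This is a minimal sketch under stated assumptions: `local_generate` is a hypothetical stand-in for your own inference code, and `AuditedModel` is an illustrative name, not an API from any library.

```python
import hashlib
import json
import time
from typing import Callable, Dict, List


def local_generate(prompt: str) -> str:
    """Hypothetical stand-in for a self-hosted model call; swap in real inference."""
    return f"[model output for {len(prompt)} chars of input]"


class AuditedModel:
    """Wraps a local generate function and keeps an in-memory audit trail."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate
        self.audit_log: List[Dict[str, object]] = []

    def __call__(self, prompt: str, user: str) -> str:
        response = self._generate(prompt)
        # Store a hash rather than the prompt, so the log holds no raw sensitive text.
        self.audit_log.append({
            "timestamp": time.time(),
            "user": user,
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "response_chars": len(response),
        })
        return response

    def export_jsonl(self) -> str:
        """Serialize the audit trail as JSON Lines for archiving or review."""
        return "\n".join(json.dumps(entry, sort_keys=True) for entry in self.audit_log)


model = AuditedModel(local_generate)
model("Summarize patient record 123", user="alice")
print(model.export_jsonl())
```

Because both the model and the log live on your own infrastructure, the record of every request stays under your control. A production system would persist the log to tamper-evident storage rather than memory.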

Patterns of Enterprise Adoption

Organizations that view AI as important to their competitive advantage are 40 percent more likely to use open-source AI models. The reasons are clear:

Full control over data flows.

Ability to fine-tune on proprietary datasets.

Elimination of vendor lock-in risk.

Open security auditing.

Speed of Innovation: Community vs. Corporate Labs

The pace of open-source innovation is breathtaking. When Meta released Llama, the community improved and optimized the model within weeks, producing versions specialized for medicine, law, and code. This distributed development model moves faster than even the best-funded corporate labs can match.

The Network Effect

Open-source development benefits from what economists call network effects. Each improvement benefits everyone, creating a virtuous cycle. GitHub repositories are filled with highly optimized models, custom training scripts, and optimization techniques that propel the whole ecosystem forward.

Corporate Response

Even OpenAI has recognized this shift. Sam Altman acknowledged that the company has been on the wrong side of history with its open-source strategy, and OpenAI has since published its first open-weight models in more than half a decade. It is a significant strategic shift for a company built on proprietary models.

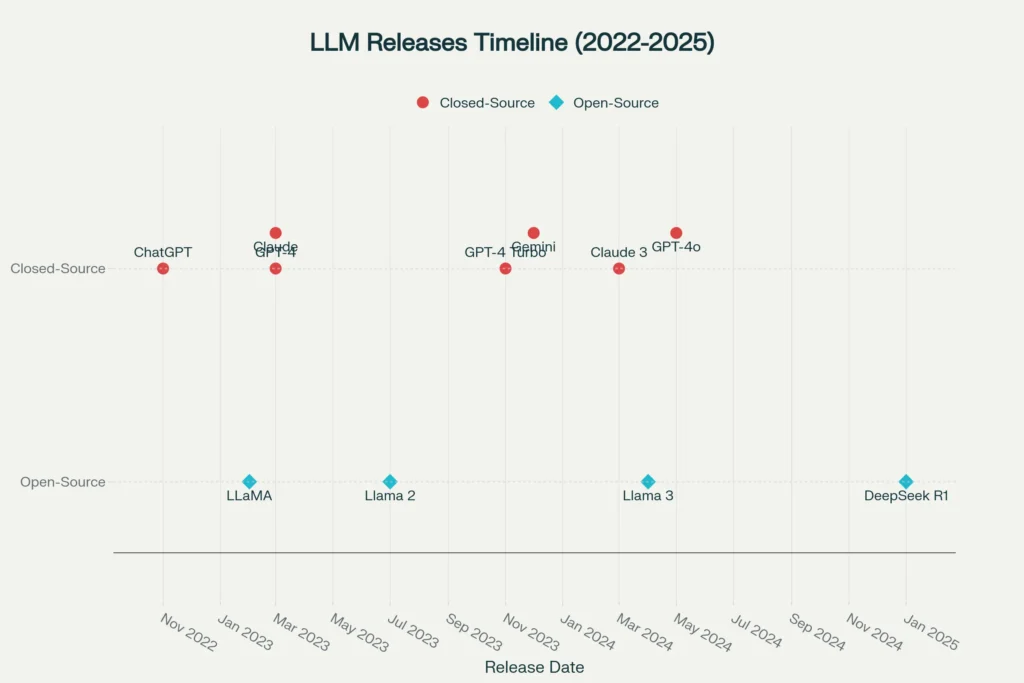

Timeline of major Open-Source and Closed-Source LLM releases from 2022-2025

The Cutthroat Competition: Tech Giants Fight for the Crown

Competition among OpenAI, Google, and Meta is more intense than ever, with each company taking a fundamentally different approach.

OpenAI’s Proprietary Push

OpenAI’s lead rests on a superior overall user experience and enterprise integration, with ChatGPT attracting hundreds of millions of users each week. Its API-first, closed-source strategy has helped it reach a valuation of $300 billion.

Meta’s Open-Source Gambit

Meta’s strategy is a calculated bet against closed-source orthodoxy. By open-sourcing Llama, Meta positions itself as the antithesis of the closed strategies of OpenAI and Google. Mark Zuckerberg has said that open source leads to more innovation because it allows many more people to build with new technology.

Google’s Hybrid Approach

Google leverages its vast datasets and computing infrastructure while selectively open-sourcing models such as Gemma. Integration with Search, Android, and Workspace gives it enormous distribution advantages.

The Talent War

The competition for AI talent is now intense, with Meta reportedly making offers above the $2 million mark to secure talent while still losing people to rivals. This struggle over human capital is another front in the larger AI war.

Striking a Balance between Innovation, Safety and Accessibility

The open vs. closed debate touches on some of the most important questions about AI safety and accessibility. Closed-source proponents argue that keeping powerful models behind API walls is safer and prevents misuse. Open-source proponents counter that openness and decentralization produce more robust, more reliable systems.

Safety Considerations

Each approach has its own challenges:

Closed-source: The lack of transparency makes bias detection and safety auditing difficult.

Open-source: Lowers the barrier to potentially malicious use, but lets the community make the models safer.

The Regulatory Landscape

Governments around the world are struggling to regulate AI development while still encouraging innovation. The European Union’s AI Act takes a risk-based approach, while the United States favors industry-specific regulation. These frameworks will significantly influence the open vs. closed-source dynamic.

Use Cases and Applications: Where Each Approach Excels

Different applications favor different approaches, depending on their specific needs and constraints.

Open-Source Advantages

Custom enterprise applications that need deep integration with proprietary systems.

Research and development, where transparency and reproducibility are crucial.

Regulated industries with strict data sovereignty requirements.

Specialized domains that benefit from fine-tuning on proprietary data.

Closed-Source Strengths

Rapid prototyping and proof-of-concept development.

Applications that need minimal customization.

Business solutions in which vendor support is vital.

Consumer-facing products that require polish and reliability.

The Battle for the Future of AI: What Is at Stake?

This is not only about technology; it is about power, democracy, and economic opportunity in the age of AI. It remains to be seen whether artificial intelligence will be a democratizing force that empowers individuals and smaller organizations, or whether it will stay in the hands of a few tech titans.

Economic Implications

Open-source AI could level the playing field for startups, developing nations, and academic institutions that currently cannot afford access to advanced AI capabilities. Closed-source models, by contrast, offer sustainable business models that finance further research and development.

Geopolitical Dimensions

The embrace of open source by Chinese companies such as DeepSeek, which have successfully built their own models, adds geopolitical complexity to this discussion. As AI capabilities become national competitive advantages, the choice between open and closed source is becoming a strategic question.

The Innovation Paradox

Paradoxically, open-source development may be advancing faster than closed-source research precisely because of its collaborative nature. The community-led advances to the Llama models suggest that distributed innovation can outpace centralized research and development.

Future Perspectives: The Future of AI Development

The signs point to a hybrid future in which open and closed-source models coexist, each serving different market needs. The major trends that will shape this future are:

Crossover of Capabilities

The performance gap between open and closed models is steadily shrinking, so the choice increasingly depends on factors other than raw capability.

Specialized Applications

Different applications will favor different approaches depending on their needs for customization, privacy, cost, and support.

Regulatory Evolution

Government policy will increasingly shape the balance between open and closed, possibly requiring transparency in some applications while protecting proprietary research.

Community Maturation

The open-source AI community is developing better governance structures, safety measures, and quality assurance practices that address long-standing concerns about community-built software.

The Verdict: Neither Option Is Universally Superior

The battle between open-source and closed-source LLMs will not necessarily have a winner, and that is probably a good thing. Each approach has distinct benefits that serve the varied needs of a diverse technology landscape.

Choose Open-Source When:

Data sovereignty is non-negotiable.

Customization and fine-tuning are essential.

Cost optimization is the priority.

Auditability and transparency are needed.

You have in-house technical deployment expertise.

Choose Closed-Source When:

Rapid deployment is essential.

Enterprise-level support is necessary.

Cutting-edge performance justifies a premium.

Your team lacks AI infrastructure expertise.

The vendor takes care of regulatory compliance.
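The two checklists above can be read as a simple tally. The sketch below encodes them as sets and returns whichever side matches more of your stated needs, falling back to "hybrid" on a tie. The criterion labels and the `recommend` function are hypothetical names chosen for illustration, and equal weighting of every criterion is a simplifying assumption.

```python
from typing import Iterable

# Hypothetical labels for the criteria listed above.
OPEN_SOURCE_SIGNALS = {
    "data_sovereignty",
    "customization",
    "cost_optimization",
    "auditability",
    "deployment_expertise",
}
CLOSED_SOURCE_SIGNALS = {
    "rapid_deployment",
    "enterprise_support",
    "premium_performance",
    "no_infra_expertise",
    "vendor_compliance",
}


def recommend(needs: Iterable[str]) -> str:
    """Return 'open-source', 'closed-source', or 'hybrid' from a simple tally."""
    needs = set(needs)
    unknown = needs - OPEN_SOURCE_SIGNALS - CLOSED_SOURCE_SIGNALS
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    open_votes = len(needs & OPEN_SOURCE_SIGNALS)
    closed_votes = len(needs & CLOSED_SOURCE_SIGNALS)
    if open_votes > closed_votes:
        return "open-source"
    if closed_votes > open_votes:
        return "closed-source"
    return "hybrid"  # ties, including no stated needs


print(recommend({"data_sovereignty", "auditability", "enterprise_support"}))
```

In practice some criteria, such as a hard data sovereignty requirement, would outweigh all the others, so a real decision process would weight them rather than count them.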

The future will probably belong to organizations that use closed-source models for rapid prototyping and general-purpose tasks, and open-source solutions for specialized, privacy-sensitive, or cost-sensitive use cases.

The open-source community is not merely catching up. As the DeepSeek R1 breakthrough shows, it is leading in innovation and efficiency. The fight to control the future of AI will be won not by either side but by the healthy competition between them, which drives constant improvement.

The real winners? Developers, businesses, and ultimately users, who benefit from competitive innovation that makes AI tools better, cheaper, and more accessible to all.

FAQs

1. Are open-source LLMs realistic for small businesses without dedicated AI specialists?

Yes, but with caveats. Cloud platforms such as Hugging Face, Replicate, and RunPod now offer managed hosting that handles most of the complexity, making open-source models easier to use. With little technical skill, a small business can run Llama 3 or Mistral models on these platforms and get 80% of the benefits at a fraction of the cost. However, advanced customization and fine-tuning still require specialized knowledge.

2. How do open-source LLMs perform across multiple languages compared with closed-source systems?

Open-source models often have strong multilingual capabilities because the global community contributes training data and improvements, including for languages that are otherwise underrepresented. Meta’s Llama models support more than 100 languages, and community fine-tuned versions serve particular regional dialects. Closed models usually prioritize commercially viable languages and may underserve smaller linguistic communities.

3. What happens to my fine-tuned model if the original open-source project is abandoned?

This is actually an advantage of open-source models. Because you hold the full model weights and architecture, you remain in full control even if the original developers stop supporting the project. The community can fork the project, and you are free to continue development yourself. With closed-source APIs, service discontinuation can leave you stranded.

4. Are there legal risks in using open-source LLMs in commercial applications?

Most modern open-source LLMs use permissive licenses (MIT, Apache 2.0) that allow commercial use. However, some models, such as Llama, use custom licenses with restrictions on very large-scale services (700M+ users). Always check that the license terms fit your use case. The larger legal risk usually lies in training-data copyright issues, which affect both open and closed-source models.

5. How do open-source models handle bias and safety?

Open-source models are more transparent and can be corrected by the community, which makes bias easier to detect and fix, although they may lack the advanced safety filtering of commercial APIs. That transparency lets organizations adopt safety practices that match their own needs and values, rather than relying on vendor-imposed restrictions that may not.