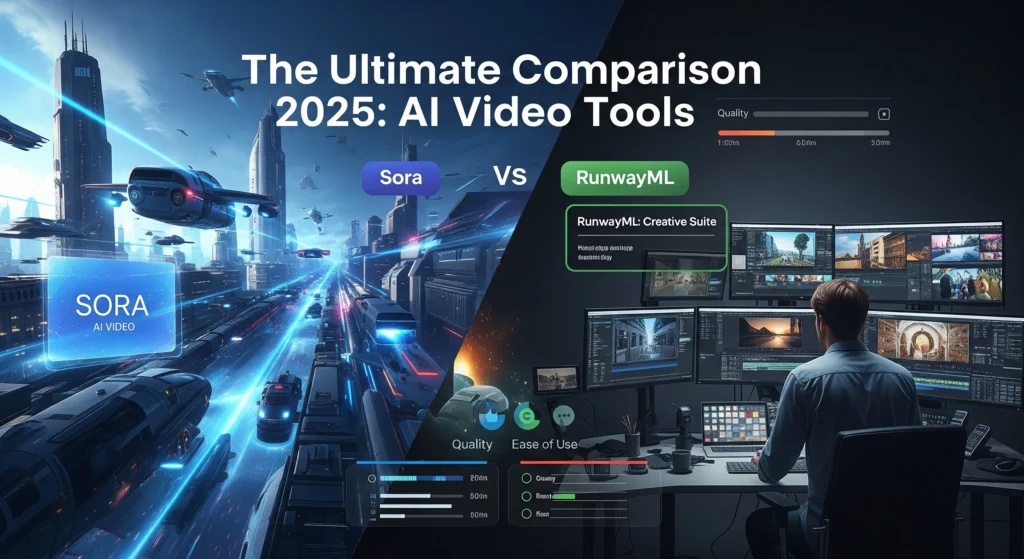

Introduction to the Future of Generative Video: Dissecting Generative Tools Such as Sora and RunwayML

The online environment is undergoing an earthquake that is rewriting the rules of content creation. We are talking about a world where you can type a sentence and watch Hollywood-quality video appear, where you can bring your wildest creative ideas to life in seconds, and where the line between creativity and reality disappears.

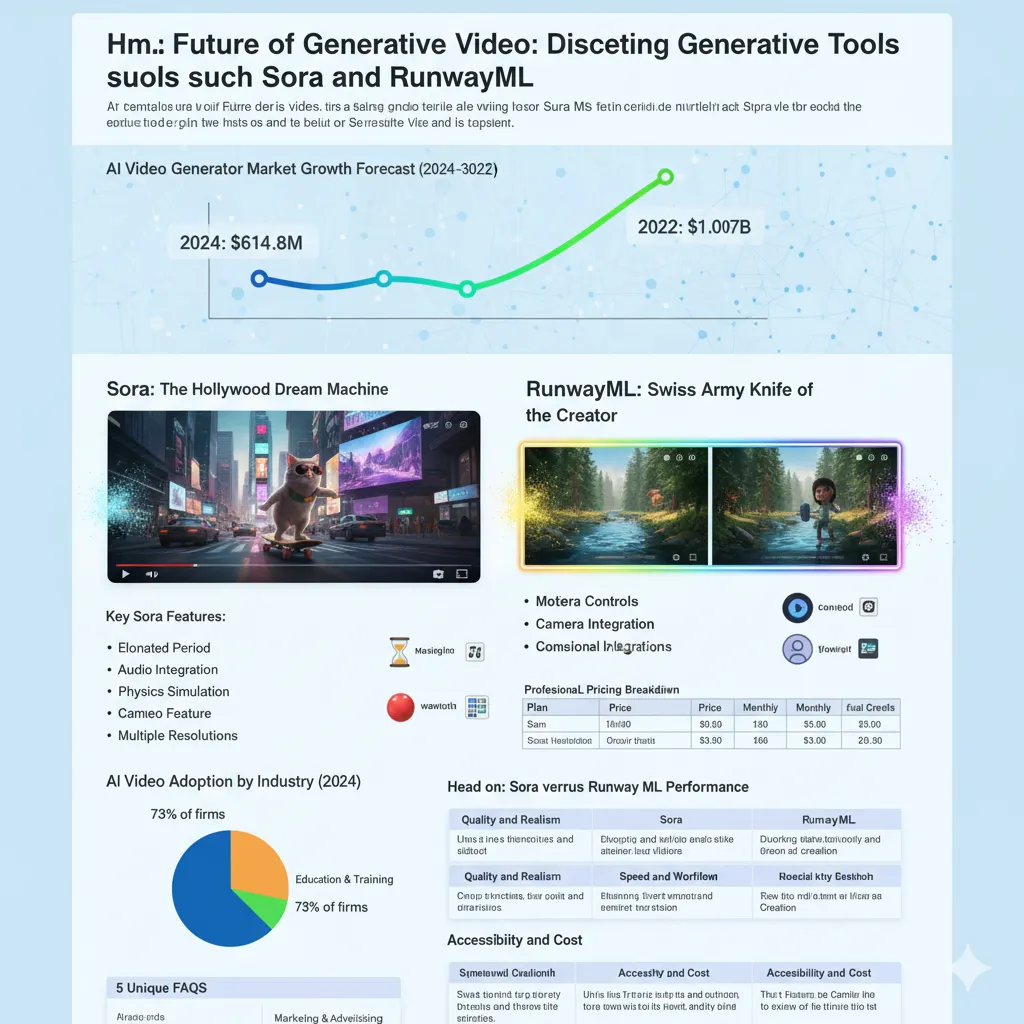

Generative video is not just coming; it is accelerating at a staggering pace. The market forecast for AI video generators is remarkable: $614.8 million in 2024, growing to a projected $14.07 billion by 2032. This is not just growth; it’s a revolution in progress. Two giants are leading the charge: OpenAI’s Sora and RunwayML.

AI Video Generator Market Growth Forecast (2024-2032)
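To put the forecast in perspective, the two market figures imply a compound annual growth rate. The sketch below is a back-of-the-envelope calculation that assumes the $614.8 million and $14.07 billion figures quoted in this article and eight years of compounding; the function name `cagr` is our own, and the inputs have not been independently verified.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in this article, in USD millions (assumed, not verified).
MARKET_2024 = 614.8
MARKET_2032 = 14_070.0

implied_rate = cagr(MARKET_2024, MARKET_2032, years=2032 - 2024)
print(f"Implied annual growth: {implied_rate:.1%}")
```

With these inputs the implied rate works out to roughly 48% per year, which is worth keeping in mind when comparing this forecast with others.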

However, what most people fail to recognise is that these are no longer just fancy technology demonstrations. These tools are being employed currently by real businesses, creators, and entrepreneurs to create content that would have cost them thousands of dollars and weeks of production time only two years ago.

So, What the Hell is Generative Video AI?

Imagine a film crew, director, and the entire post-production team all built into one software app – that’s generative video AI. You give it a text prompt, such as “a cat in sunglasses on a skateboard riding through Times Square,” and it generates a video clip that appears to have been filmed with a professional camera.

The technology operates by recognising patterns of words, visual concepts, and motion. These AI models were trained on millions of hours of video materials and understand how water moves, how a human walks, how light bounces on surfaces, and how emotions change across a face.

Sora: The Hollywood Dream Machine

Sora, created by OpenAI (the company behind ChatGPT), is the future of text-to-video generation. First released in December 2024, it can generate 1080p videos up to 60 seconds long with synchronised audio and dialogue.

What Makes Sora Special?

Sora is not merely creating videos; it is creating tiny movies. The model has achieved something that seemed impossible only a few months ago: combining realistic physics with narrative consistency over longer sequences.

Key Sora Features:

Extended Duration: Up to 60 seconds of coherent, uninterrupted video.

Audio Integration: Creates synchronised sound effects, background music, and even multi-speaker dialogue.

Physics Simulation: Realistically simulates real-world physics, including bouncing balls and flowing water.

Cameo Feature: This feature allows you to place yourself or others into AI-generated scenes using photos.

Multiple Resolutions: From 480p to 1080p at different aspect ratios.

Applications of Sora in the Real World

Content creators have already discovered creative ways to exploit the possibilities of Sora. It is used by marketing teams to demonstrate products, by educators to create videos explaining complicated ideas, and by social media creators to make interesting short videos without any filming devices.

Character consistency is another impressive achievement. Once a character appears in a first clip, Sora can carry that same character into subsequent generations and keep the story going, something that was virtually impossible with previous AI video tools.

The Catch with Sora

Despite its impressive capabilities, Sora has major limitations. It is currently invite-only, and only a small number of ChatGPT Plus ($20/month) and Pro ($200/month) subscribers in the US and Canada have access. There is a free tier with generous limits, but those limits are not clearly specified and may be tightened at times of high demand.

Furthermore, Sora still struggles with complicated physics over longer durations and sometimes produces implausible motion. Its videos are also watermarked and include C2PA metadata that marks them as AI-generated.

RunwayML: The Creator’s Swiss Army Knife

The scrappy startup RunwayML, which has been in the generative video game since 2018, has developed its Gen-4 model into a full creative suite. RunwayML produces shorter videos (up to 16 seconds), but it compensates with surgical precision, advanced editing features, and the ability to upscale to 4K resolution.

The RunwayML Advantage

While Sora impresses with its filmmaking ambitions, RunwayML has built its brand on practicality and creator-friendly features. The platform provides a whole suite of AI tools beyond video generation.

The Standout Features of RunwayML are:

Motion Brush: A painting tool that lets you add motion to a chosen region of an image.

Camera Controls: Replicate various camera movements and angles in created videos.

Various Models: Gen-3 Alpha, Gen-3 Alpha Turbo, and the new Gen-4.

4K Upscaling: Upscale a generation from any resolution to 4K for cinema-quality output.

Professional Integration: API access and tools for production workflows.

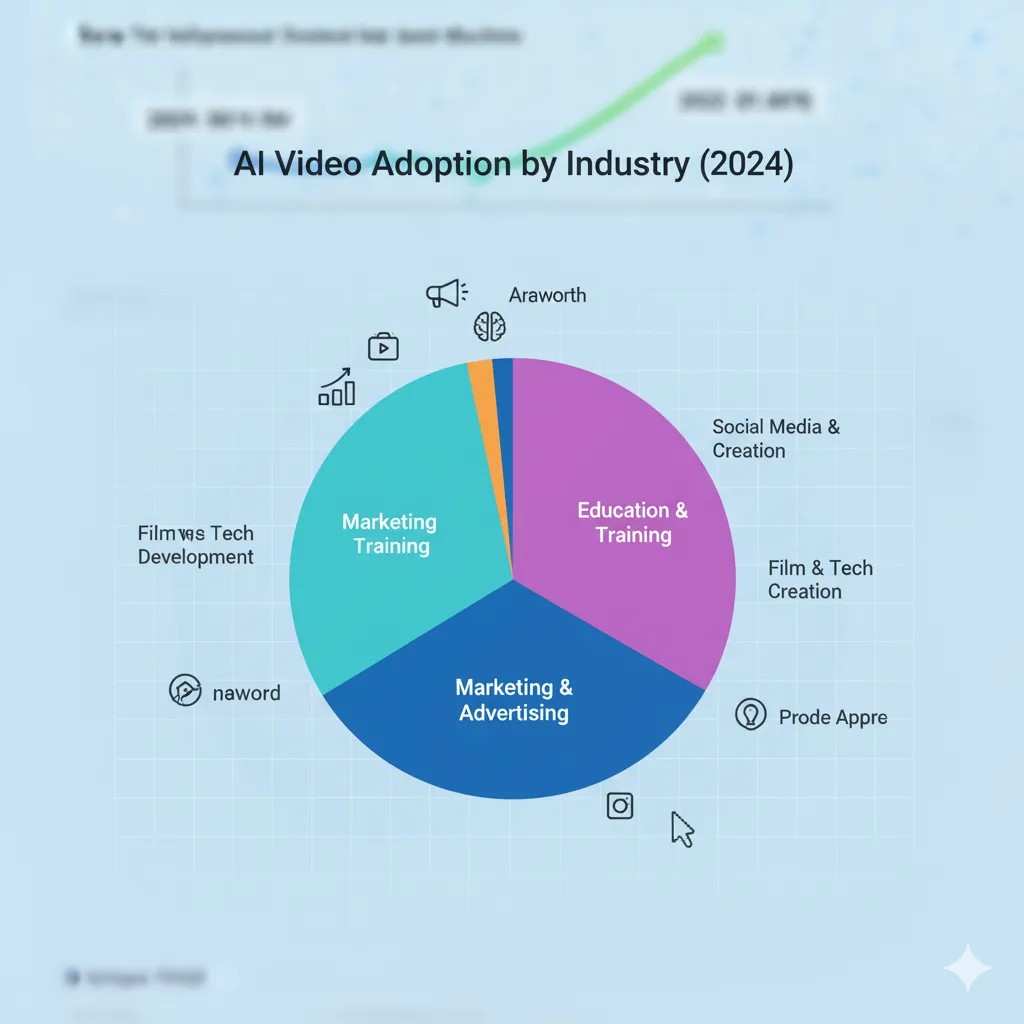

AI Video Adoption by Industry (2024)

The Credit System Explained

RunwayML uses a credit-based system in which different kinds of video generation cost different numbers of credits. A 10-second Gen-4 video at 1080p costs about 160-200 credits, and a 4K video can use 250-300 credits. This system offers flexibility, but heavy users need to manage their credit usage carefully.

RunwayML Pricing Breakdown:

Free Plan: 125 one-time credits (about 25 seconds of Gen-4 Turbo content)

Basic Plan: $12/month and 625 monthly credits.

Pro Plan: $28/month and 2,250 monthly credits.

Unlimited Plan: $76/month and unlimited generations.
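To see what the credit figures mean in practice, the sketch below estimates how many 10-second 1080p Gen-4 clips a monthly allowance covers. It uses only the numbers quoted in this article (160-200 credits per clip, 625 and 2,250 monthly credits); actual rates vary by model and change over time, so treat every constant as a placeholder. The names `clips_per_month`, `CREDITS_PER_10S_1080P`, and `MONTHLY_CREDITS` are illustrative and not part of any RunwayML API.

```python
# Figures quoted in this article; placeholders, not official rates.
CREDITS_PER_10S_1080P = (160, 200)  # (low, high) estimate per clip
MONTHLY_CREDITS = {"Basic": 625, "Pro": 2250}


def clips_per_month(monthly_credits: int, credits_per_clip: int) -> int:
    """Number of whole clips that a monthly credit allowance covers."""
    return monthly_credits // credits_per_clip


for plan, credits in MONTHLY_CREDITS.items():
    low_cost, high_cost = CREDITS_PER_10S_1080P
    fewest = clips_per_month(credits, high_cost)  # every clip at the high estimate
    most = clips_per_month(credits, low_cost)     # every clip at the low estimate
    print(f"{plan}: between {fewest} and {most} ten-second 1080p clips per month")
```

At these rates the Basic plan covers about three such clips per month and the Pro plan between 11 and 14, before counting any retries.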

Who Should Choose RunwayML?

RunwayML suits designers who need fine-grained control over their video creation process. The Motion Brush functionality alone makes the platform extremely powerful for advertising agencies, social media managers, and content creators who need particular visual elements to move in a specific way.

RunwayML is well suited to prototyping and high-volume content because of its short generation times (Gen-4 Turbo can generate a 10-second clip in approximately 30 seconds).

Head-to-Head: Sora versus RunwayML Performance

Quality and Realism

The two platforms excel in different aspects of video quality. Sora consistently delivers more cinematic, narrative-based content with better physics simulation and longer, cohesive scenes. Its videos tend to look like professional recordings, particularly in naturalistic shots.

Despite its shorter clips, RunwayML Gen-4 offers a greater level of control over the creative process. Its zero-shot style adaptation picks up a particular visual style directly from reference images, which makes it remarkably effective at preserving brand consistency.

Speed and Workflow

RunwayML takes the lead in generation speed and workflow efficiency. The processing speed of Gen-4 Turbo suits projects with tight deadlines, and the editing suite lets you refine the work on the same platform.

Although Sora’s output quality is impressive, it takes longer to process and still lacks the advanced editing options that RunwayML offers.

Accessibility and Cost

This is where the platforms diverge. RunwayML has transparent, tiered pricing and a free plan available as soon as you sign up. Users can begin with the free version and upgrade to a higher tier depending on their requirements.

Sora’s invite-only system creates an accessibility barrier, but its eventual free tier (with restrictions) may democratize high-quality video generation. Nevertheless, the unspecified usage limits and restricted access make professional planning difficult.

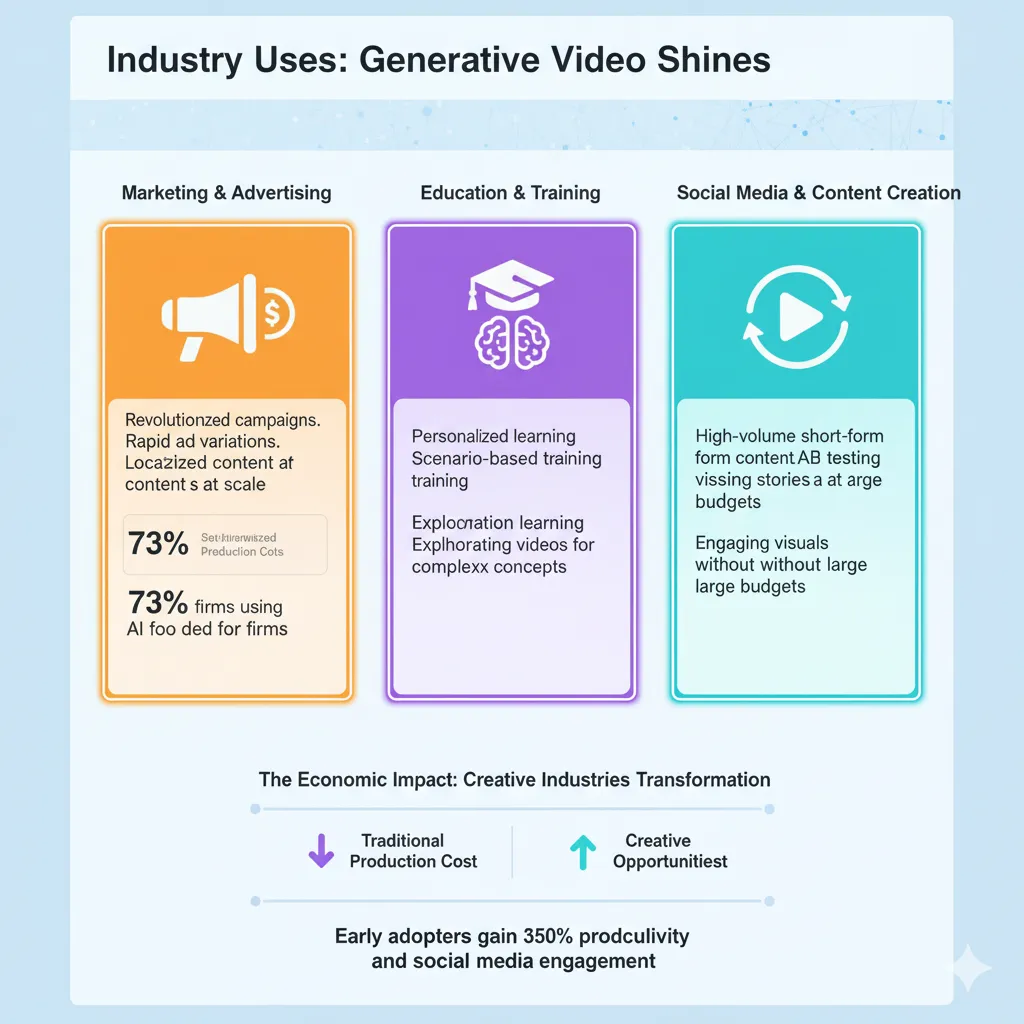

Industry Uses: Where Generative Video Shines

The Revolution in Marketing and Advertising

Marketing teams are spearheading the adoption of AI video: 73% of firms in the industry have already tested video generation tools [industry statistics]. The ability to develop numerous ad variations, experiment with different visual strategies, and generate localized content on a global scale has revolutionized campaign development.

As a real-world example, the global marketing giant WPP offered to generate a complete Super Bowl ad in-house with the help of generative AI, cutting production time and costs significantly, and allowing the company to adjust campaigns in real time.

Education and Training

Organizations and educational institutions are using AI video to offer customized learning programs. The technology makes it possible to create scenario-based training videos, language learning content, and visual explanations of complex concepts without the need for traditional filming.

Social Media and Content Creation

These tools are helping individual creators and social media managers maintain regular posting schedules, develop interesting short-form content, and test visual storytelling methods once available only to productions with large budgets.

Both platforms enable creators to A/B test content effectiveness and maximize engagement rates by creating numerous versions of a single idea.

The Technical Evolution: What’s Under the Hood

Diffusion Models and World Simulation

Sora and RunwayML both use sophisticated diffusion models, although they apply them very differently. Sora uses a world model approach, trying to make sense of physical laws and spatial relations and simulate them. This allows for much more realistic physics simulation, but it consumes colossal computing resources.

RunwayML’s approach prioritises practical utility, aiming for faster generation and more creator control. The Gen-4 model focuses on consistency and controllability, especially when conditioned on input images.

Training Data and Capabilities

The quality of AI-generated video depends directly on the quality and diversity of the training data. Both platforms were trained on large volumes of video content, but their different data curation strategies and model architectures shape their respective strengths.

Sora’s training emphasizes the comprehension of temporal relationships and narrative continuity, while RunwayML’s training concentrates on providing creators with the necessary control over visual elements and movement patterns.

Limitations and Challenges

Existing Technical Limitations

The two platforms have weaknesses in specific situations:

Sora’s challenges:

Complex object interactions over long durations.

Occasional physics-breaking artifacts in longer sequences.

Limited availability and vague usage restrictions.

RunwayML’s limitations:

Reduced maximum video lengths (16 seconds for Gen-4)

The credit system can be costly when used extensively.

No inbuilt audio generation capabilities [comparison data].

Ethical Considerations

The emergence of AI video generation prompts critical consideration of content authenticity, deepfakes, and copyright interests. Both platforms have put safeguards in place:

Watermarking of created work.

C2PA content verification metadata.

Restrictions on generating content that depicts identifiable people without permission.

Automated systems for detecting potentially harmful content.

Predictions: What the Future Holds

Short-term Developments (2025-2026)

It is expected that the generative video world will experience:

Better Realism: Improved physics simulation and motion quality.

Longer Durations: Longer videos that remain coherent throughout.

Real-Time Generation: Video generation at near real-time speeds.

Improved Audio Integration: More advanced audio generation and synchronization.

Long-term Vision (2027-2030)

According to industry experts, revolutionary changes are to be expected:

Hyper-personalization: AI videos based on personal viewer preferences and demographics.

Interactive Content: Videos that are interactive and react to viewer feedback in real-time.

Industry Integration: Full workflow integration with professional video production pipelines.

Democratized Filmmaking: Anyone with creative imagination will be able to produce top-quality video content.

Choosing the Right Tool for Your Project

Choose Sora if:

You require more story-based video material.

Sound synthesis plays an important role in your projects.

You prioritize cinematic quality over extensive editing.

You are able to work with invite-only availability restrictions.

Choose RunwayML if:

You require precise control over visual elements.

Speed of generation and rapid iteration are important.

You need 4K output potential.

You want immediate access and transparent pricing.

Your creative workflow requires integration with other creative tools.

Consider Both if:

You are a professional content creator with diverse project needs.

Your budget supports multiple platform subscriptions.

You want to compare outputs to achieve the best results.

Getting Started: Putting It into Practice

For Beginners

Begin with RunwayML’s free plan to learn how video generation works. Experiment with basic text-to-video prompts, then move on to more complex image-to-video generation and motion control.

Sample beginner prompts:

A serene lake at sunset with small waves.

A coffee cup steaming on a wooden table.

Flowers waving in a light breeze.

For Advanced Users

Use the advantages of each platform. Cinematic sequences and storytelling projects should be done with Sora, and RunwayML can be used for fine motion control and quick iterations.

Use both platforms hand-in-hand: create base footage using one tool and then perfect it with the specialised capabilities of the other tool.

The Economic Impact: Creative Industries Transformation

The generative video revolution is redefining entire industries. The cost of traditional video production is plummeting, and creative opportunities are growing exponentially. It is now possible for small businesses to produce high-quality promotional material, for educators to create engaging visual content, and for creators to compete with large studios in terms of visual quality.

Nevertheless, this change also brings up concerns about the future of traditional video production jobs and the necessity of reskilling in the creative industries.

Beyond the Hype: Real-World Performance

Businesses that use AI to generate videos report significant gains in content creation efficiency and cost savings. Early adopters in the marketing industry report productivity gains of 350 percent and a 500 percent increase in social media engagement when using AI-generated video content.

The technology does not remove human creativity; it enhances it, allowing creators to concentrate on storytelling and idea creation while AI handles the technical work.

Conclusion: The Future is Already Here

The technology of generative video has ceased to be a subject of experimental interest and is now a production tool. Sora and RunwayML represent two different philosophies: cinematic ambition versus practicality, narrative storytelling versus exact control.

It is not a question of which is creatively superior, but which fits your creative vision, workflow requirements, and project constraints. Both platforms will continue to develop at a fast pace, introducing new functionalities, better quality, and additional capabilities regularly.

The most exciting aspect? This change is in its infancy. The generative video tools that exist today will look primitive next to what arrives in the next few years. It is not a question of whether you are going to use these technologies, but how fast you can adopt them into your creative process.

The future of video creation is here, and it is more affordable, more powerful, and more creative than ever before.

Whether you are a marketing expert looking to scale up your content creation, an educator wanting to bring visual learning to your students, or a creator about to make impossible things come true, generative video AI gives you more ways to tell your story than ever before.

The only limit now? Your imagination.

FAQs

Q1: Is there any problem with copyright when using AI-generated videos commercially?

A: Sora and RunwayML both grant commercial rights to content created on their platforms. Nevertheless, you need to consider possible copyright issues when your prompts mention existing copyrighted characters or brands. Always check each service’s terms and add your own original elements to the generated content.

Q2: How do AI video generators treat faces and people in generated content?

A: Both platforms have put measures in place to prevent the creation of deepfakes of real individuals without their permission. Sora restricts uploads of people by default and uses automatic detection, whereas RunwayML includes face blur and content moderation features. Generated people are usually composites rather than copies of real individuals.

Q3: How difficult is it to learn how to use AI video generation?

A: The learning curve is surprisingly gentle! Both platforms are user-friendly. The main skill you need is descriptive writing; no technical expertise is required for basic text-to-video generation. Most users can produce usable content during their first session, and advanced functions like style customisation and motion control can be learned in a few days.

Q4: Are these tools able to completely substitute traditional video production?

A: Not entirely, but they are dramatically changing the landscape. AI-generated videos are very effective for visualising concepts, social media posts, and scenarios that would be costly or impossible to shoot in real life. Nonetheless, complex narratives, detailed product demonstrations, and content involving human actors are often better produced with conventional methods, which in most cases are now supported by AI tools.

Q5: How does the cost compare to traditional video production?

A: The savings are dramatic. A 30-second professional video that might cost between $5,000 and $15,000 to produce traditionally would take roughly 480-600 credits on RunwayML at the Gen-4 rates quoted above, which fits within a single month of the $12 Basic plan, and may cost nothing at all on Sora (when available). Nevertheless, factor in the time and credits required for refinement, as it may take several generations to achieve the desired outcome.
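Because refinement can take several generations, a rough estimate should multiply the per-clip cost by the number of attempts. The sketch below assumes the upper Gen-4 rate quoted earlier in this article (200 credits per 10 seconds, so 20 credits per second) and derives a per-credit price from the Basic plan ($12 for 625 credits). The function `runway_cost_usd` and its parameters are hypothetical names for illustration, and real pricing may differ.

```python
def runway_cost_usd(seconds: int, attempts: int,
                    credits_per_second: float = 20.0,
                    usd_per_credit: float = 12 / 625) -> float:
    """Estimated dollar cost of generating `seconds` of video `attempts` times.

    Defaults are assumptions taken from figures quoted in this article,
    not official RunwayML rates.
    """
    return seconds * credits_per_second * attempts * usd_per_credit


TRADITIONAL_LOW_USD = 5_000  # low end of the traditional range quoted above

for attempts in (1, 5, 10):
    cost = runway_cost_usd(seconds=30, attempts=attempts)
    ratio = TRADITIONAL_LOW_USD / cost
    print(f"{attempts:>2} attempts: ${cost:,.2f} (traditional low end is {ratio:,.0f}x more)")
```

Even at ten attempts, the estimate stays far below the low end of the traditional range, though credits beyond a plan’s monthly allowance would mean moving to a larger plan.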