The Biggest Challenges for AI in Healthcare in 2026

AI has moved from small pilot projects to everyday use in hospitals, clinics, insurance companies, and digital health platforms. By early 2026, about 88% of health systems use AI for tasks such as revenue cycle optimization, ambient clinical documentation, and radiology triage. Yet only about 17% report mature AI programs with a clear strategy and governance rules. The biggest challenges for AI in healthcare in 2026 lie in the gap between adoption and readiness.

This article analyzes the challenges of implementing AI in healthcare, drawing on a systematic review of barriers, recent mixed-methods studies of AI deployment, and practical case studies in radiology, sepsis prediction, and oncology. It also connects these problems to broader trends in AI in digital health for 2026 and offers best practices and future directions.

A Short History: From Dartmouth to Digital Hospitals

To understand today’s problems, it helps to remember how young the field is. AI became a formal field of study in the 1950s, and the 1956 Dartmouth Workshop is widely regarded as its starting point. That decade saw the term “artificial intelligence” coined, early programs such as the Logic Theorist built, and the first attempts to make machines reason like people.

Healthcare, however, has only recently begun to use AI in a meaningful way. In the 1980s, expert systems were used mainly for diagnosis in a few specialties, but they stayed in research settings because data were scarce and computing power was limited. The real turning point came in the 2010s and 2020s, when three developments converged: digitized health records, cloud computing, and deep learning. AI in digital health took off. There are now computer vision tools for dermatology and radiology, predictive models for clinical deterioration and hospital readmission, and recommendation engines for oncology and chronic disease management.

By 2025–2026, AI plays a major role across the healthcare industry. It has already transformed:

Imaging, such as radiology and pathology

Hospital operations and capacity management

Pharmacy and medication safety

Revenue cycle and prior authorization

Remote monitoring and virtual care

Life sciences R&D and drug discovery

But this period has made it painfully clear that building accurate models is the easy part. The hard part is deploying AI in healthcare safely, fairly, and at scale.

What AI in Healthcare Will Be Like in 2026

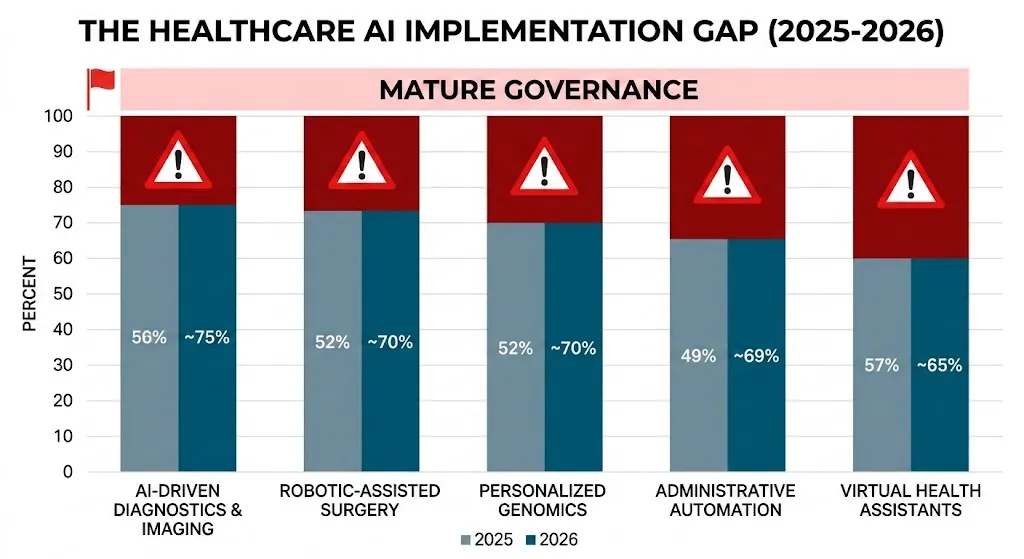

Recent surveys and scoping reviews convey a consistent narrative: adoption rates are high, outcomes are positive in some areas, but structural challenges are widespread. A report from 2025 on 233 health systems, for example, found:

Health System AI Readiness (2025–26)

| Metric | Share of organizations |
| --- | --- |
| Organizations using AI in at least one part of the business | 88% |
| Finance/healthcare respondents using pilot or full-scale AI solutions | 71% |
| Organizations reporting some AI governance structure | ~70% |
| Mature governance and a well-defined AI strategy | 17% |
| Able to produce a full AI audit trail for regulators within 30 days | 22% |
| Enforced AI rules for model inventory and lineage | 29% |


A systematic review of barriers to the adoption of AI in healthcare identifies six primary categories of challenges: ethical, technological, liability and regulatory, workforce, social, and patient safety. A more recent mixed-methods study organizes barriers across the AI implementation lifecycle into 12 themes: leadership, buy-in, change management, engagement, workflow, finance and human resources, legal, training, data, evaluation and monitoring, maintenance, and ethics.

In 2026, the most important issues for AI in healthcare won’t be whether it can work in theory, but how to use, manage, and keep it safe in messy, real-world systems.

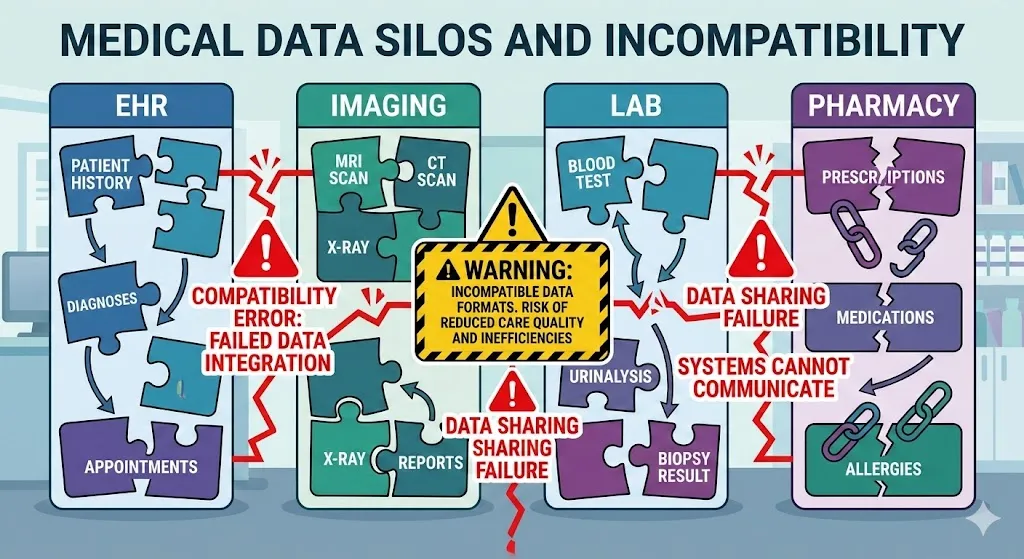

1. Data Security, Privacy, and Regulatory Concerns

Fragmented, sensitive data in a high-stakes setting

Because healthcare data is highly sensitive, its use is tightly regulated. The World Economic Forum notes that digital and AI solutions often fall short because data is fragmented, regulations are strict, and too few anonymized datasets are available to train models. Clinical information is still stored separately in EHRs, imaging archives, lab systems, pharmacy platforms, and insurer databases, often in incompatible formats.

Systematic reviews reveal several interconnected issues:

Privacy and re-identification risk: Even de-identified datasets can often be traced back to individuals, especially when combined with data from other sources.

Cybersecurity: Attackers target not only production EHRs but also AI pipelines and model-training data.

Regulatory uncertainty: Developers and hospitals must follow rules that were not written with continuously learning AI in mind, including HIPAA and GDPR, medical device regulations, data protection law, and sector-specific rules.

Reluctance to share data: Organizations hesitate to share detailed data for AI training because of commercial, reputational, and legal risks.
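Re-identification risk is often assessed with k-anonymity: a record is only as private as the number of other records that share its quasi-identifiers (fields such as a partial ZIP code, birth year, and sex that are not identifiers on their own but can be linked to outside data). The sketch below is a minimal illustration on hypothetical toy records; the field names and the choice of quasi-identifiers are assumptions, and a real privacy review would consider far more than this single metric.

```python
from collections import Counter

# Hypothetical quasi-identifiers: not direct identifiers, but linkable to outside data.
QUASI_IDENTIFIERS = ("zip3", "birth_year", "sex")


def _key(record, quasi_identifiers):
    # The combination of quasi-identifier values for one record.
    return tuple(record[q] for q in quasi_identifiers)


def smallest_group_size(records, quasi_identifiers=QUASI_IDENTIFIERS):
    """Return k: the size of the smallest group sharing identical quasi-identifiers."""
    counts = Counter(_key(r, quasi_identifiers) for r in records)
    return min(counts.values())


def risky_records(records, k=5, quasi_identifiers=QUASI_IDENTIFIERS):
    """Return records whose quasi-identifier combination occurs fewer than k times."""
    counts = Counter(_key(r, quasi_identifiers) for r in records)
    return [r for r in records if counts[_key(r, quasi_identifiers)] < k]


# Toy records with names and record numbers already removed.
records = [
    {"zip3": "481", "birth_year": 1950, "sex": "F", "dx": "CHF"},
    {"zip3": "481", "birth_year": 1950, "sex": "F", "dx": "COPD"},
    {"zip3": "482", "birth_year": 1987, "sex": "M", "dx": "CHF"},
]

print(smallest_group_size(records))      # 1
print(len(risky_records(records, k=2)))  # 1
```

The third record is unique on its quasi-identifiers, so anyone who knows a man born in 1987 in that ZIP prefix could learn his diagnosis even though no name was stored. Coarsening or suppressing quasi-identifiers raises k, but it also removes signal the model may need.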

A recent study of AI adoption in healthcare found that concerns about data security made people hesitant to use it. Both clinicians and patients worried about tracking, spyware, and unauthorized secondary use of health data.

Real-world blind spots in training data

Consider a large hospital system that wants to build a model predicting which heart failure patients will be readmitted. Data scientists want five years of electronic health record (EHR) data, including notes, lab results, imaging reports, and social factors. Privacy officers require extensive de-identification and filtering that strips out important location and timeline signals, making the data harder to model. Legal teams impose very strict rules on sharing data between institutions.

The result is a model trained on a small, partially censored dataset. It may look strong in internal testing, but it is fragile and cannot be deployed elsewhere because doing so is legally prohibited or impractical. Researchers studying AI implementation in healthcare have found this to be a common pattern.
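The loss of timeline signal can be made concrete. Readmission models depend on intervals, such as whether a patient returned within 30 days of discharge. The minimal sketch below contrasts HIPAA Safe Harbor-style generalization, which keeps only the year of a date, with per-patient date shifting, which moves all of one patient’s dates by the same random offset. The dates are hypothetical, and whether date shifting is acceptable for a given dataset is a question for the institution’s privacy review, not something this code settles.

```python
import random
from datetime import date, timedelta


def generalize_to_year(d: date) -> int:
    # Safe Harbor-style generalization: keep only the year.
    return d.year


def possible_gap_days(year_a: int, year_b: int) -> tuple:
    # With only years known, the true interval could fall anywhere in this range.
    shortest = (date(year_b, 1, 1) - date(year_a, 12, 31)).days
    longest = (date(year_b, 12, 31) - date(year_a, 1, 1)).days
    return shortest, longest


def shift_patient_dates(dates, rng: random.Random, max_days: int = 365):
    # One random offset per patient, so intervals between that patient's events survive.
    offset = timedelta(days=rng.randint(-max_days, max_days))
    return [d + offset for d in dates]


def readmitted_within(discharge: date, readmit: date, days: int = 30) -> bool:
    return 0 <= (readmit - discharge).days <= days


# Hypothetical heart failure patient.
discharge, readmit = date(2023, 12, 20), date(2024, 1, 10)

years = generalize_to_year(discharge), generalize_to_year(readmit)
print(possible_gap_days(*years))  # (1, 730): the 30-day label is unrecoverable

shifted = shift_patient_dates([discharge, readmit], random.Random(7))
print(readmitted_within(*shifted))  # True: the 21-day gap is preserved
```

Shifting keeps intervals but still moves absolute dates, so seasonal effects and links to external calendars are lost; each technique trades a different signal for privacy.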

2. Legacy Infrastructure and Integration Issues

Having data is not the same as having ready data

In 2025, health IT teams repeatedly found that the main obstacle to effective AI was not access to data but whether that data was usable, standardized, and ready for large-scale computation. Clinical information is often buried in PDFs, scanned documents, or free text, with inconsistent units, reference ranges, and languages. It was collected for billing and record-keeping, not for algorithmic decision-making.
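One small part of making data “ready” is harmonizing units before any model sees them. The sketch below maps lab results to a single canonical unit per analyte using a hypothetical lookup table. The two conversion factors (glucose mmol/L to mg/dL ≈ 18.016, creatinine µmol/L to mg/dL = 1/88.4) are standard approximations, but a production pipeline would more likely key on a coded terminology such as LOINC than on free-text analyte names.

```python
# Hypothetical conversion table: (analyte, source unit) -> (canonical unit, multiplier).
TO_CANONICAL = {
    ("glucose", "mg/dL"): ("mg/dL", 1.0),
    ("glucose", "mmol/L"): ("mg/dL", 18.016),  # glucose molar mass ~180.16 g/mol
    ("creatinine", "mg/dL"): ("mg/dL", 1.0),
    ("creatinine", "umol/L"): ("mg/dL", 1 / 88.4),
}


def normalize(analyte: str, value: float, unit: str) -> tuple:
    """Convert a lab value to its canonical unit, or fail loudly if no rule exists."""
    key = (analyte.strip().lower(), unit.strip())
    if key not in TO_CANONICAL:
        # Refuse to guess: an unknown unit is a data-quality issue to route to a human.
        raise ValueError(f"no conversion rule for {analyte!r} in {unit!r}")
    canonical_unit, factor = TO_CANONICAL[key]
    return round(value * factor, 2), canonical_unit


# The same physiology, recorded differently at two sites.
print(normalize("Glucose", 5.5, "mmol/L"))      # (99.09, 'mg/dL')
print(normalize("creatinine", 88.4, "umol/L"))  # (1.0, 'mg/dL')
```

Raising an error on an unknown unit is deliberate: silently passing through an unconverted value is exactly the kind of hidden inconsistency that degrades a model without anyone noticing.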

A comprehensive analysis of challenges and strategies for AI integration in healthcare highlights:

Heterogeneous data structures across vendors and sites

Poor interoperability and inconsistent adherence to standards

Missing quality metrics, terminologies, and metadata

Weak data management and lineage tracking

Integrating technology into workflows

Radiology offers a clear case study. An NIH study of AI in radiology workflows reports that deploying many AI algorithms quickly becomes unmanageable if each one needs its own connection to PACS, RIS, and reporting tools. Technical teams must maintain dozens of fragile integrations, radiologists must juggle different interfaces and result formats, and IT teams are overstretched.

The paper argues that standards-based interoperability, including consistent integration patterns, uniform formats, and standardized result objects, is essential for AI in imaging to scale. Without it, every new model makes the system more fragile and harder to understand.
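The “standardized result object” idea can be sketched in a few lines: each vendor’s output is translated once, at the edge, into a single shared schema, and everything downstream (worklists, reporting, monitoring) consumes only that schema. The `AIResult` class, the adapter functions, and the vendor payload shapes below are all hypothetical; in practice the shared format would be a published standard such as a DICOM Structured Report or a FHIR resource rather than a home-grown class.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIResult:
    """Hypothetical vendor-neutral result that every imaging model must emit."""
    study_uid: str       # DICOM Study Instance UID of the exam
    model_id: str
    model_version: str
    finding: str
    score: float         # normalized to [0, 1]
    threshold: float     # operating point set during local validation

    @property
    def flagged(self) -> bool:
        return self.score >= self.threshold


# Hypothetical adapters: one per vendor, so nothing downstream sees vendor formats.
def from_vendor_a(raw: dict) -> AIResult:
    return AIResult(raw["studyUID"], "vendor-a-ich", raw["ver"],
                    "intracranial_hemorrhage", raw["prob"], threshold=0.5)


def from_vendor_b(raw: dict) -> AIResult:
    # Vendor B reports confidence on a 0-100 scale; rescale to [0, 1].
    return AIResult(raw["study"]["uid"], "vendor-b-pe", raw["model"]["version"],
                    "pulmonary_embolism", raw["confidence"] / 100, threshold=0.7)


results = [
    from_vendor_a({"studyUID": "1.2.3", "ver": "2.1", "prob": 0.82}),
    from_vendor_b({"study": {"uid": "1.2.4"}, "model": {"version": "5"}, "confidence": 40}),
]

# One worklist rule for every model: flagged studies only, highest score first.
worklist = sorted((r for r in results if r.flagged), key=lambda r: r.score, reverse=True)
print([(r.study_uid, r.finding) for r in worklist])  # [('1.2.3', 'intracranial_hemorrhage')]
```

Under this design, adding a third model means writing one adapter rather than another set of PACS, RIS, and reporting integrations.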

Common integration problems

| Integration challenge | Impact on AI in healthcare |
| --- | --- |
| Legacy systems | Connecting heterogeneous EHR, PACS, and LIS systems takes substantial engineering work, and models are hard to port between sites. |
| Custom interfaces | Without interoperable standards, teams repeat the same integration work, become locked in to vendors, and struggle to scale beyond one site. |
| Batch processing | Real-time data access is limited, so models run on stale data, which reduces their value in acute care. |
| Fragile APIs | AI must bridge new and legacy systems, which increases the risk of failures. |

Because of these legacy infrastructure problems, the underlying plumbing often blocks AI opportunities in healthcare such as better triage, automation, and prediction.

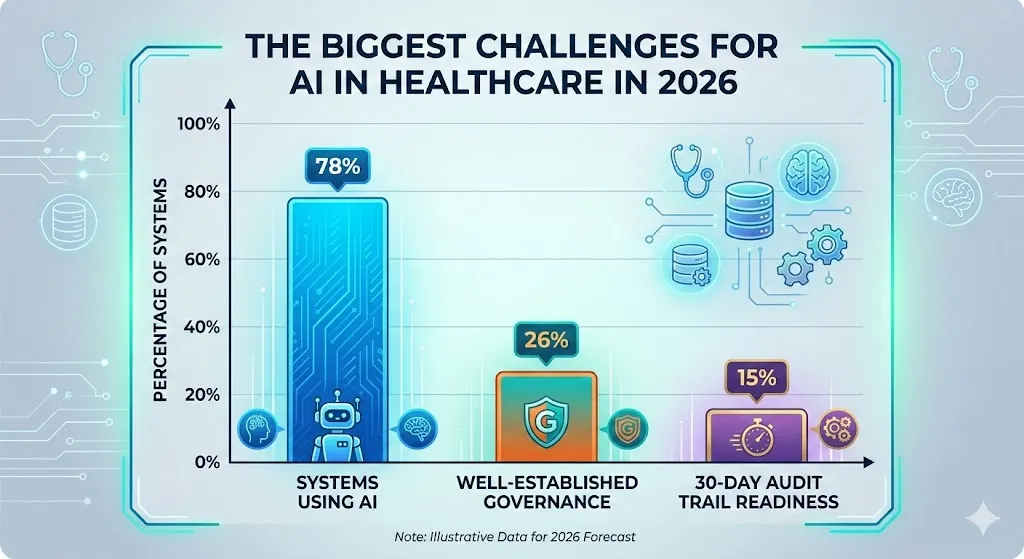

3. Shadow AI and Governance Gaps

Adoption is outpacing governance

One of the clearest AI in healthcare trends for 2026 is the gap between how widely AI is used and how well it is governed. A 2025 survey of 233 health systems found that 88% use AI internally, yet more than 80% do not yet have mature AI programs capable of managing these investments well. Much of this use is ad hoc, such as adopting vendor tools or experimenting with generative AI assistants without a clear plan.

Another study of hospital leaders showed that:

70% reported that AI pilots had failed at least once because of weak endpoints, misaligned workflows, or missing data.

80% said it was hard to verify vendors’ AI claims without formal governance.

Only 29% had fully established and enforced rules for model inventory, lineage, and sign-offs.

Only 22% could produce a complete AI audit trail for regulators or payers within 30 days.

Experts from Wolters Kluwer and other companies say that this means healthcare leaders will have to rethink how they govern AI and set up formal, organization-wide frameworks with training and safety measures by 2026.

The 80% gap in internal standards

Multiple sources converge on the same figure: about 80% of healthcare organizations lack internal governance standards strong enough to support future AI development and oversight. Typical gaps include:

No complete inventory of AI systems in use.

No clear way to tier AI risks by their impact on patients.

Weak or missing policies for human-in-the-loop review.

Little or no ongoing outcome monitoring and recalibration.

Unclear ownership across IT, clinical departments, and compliance.
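The gaps listed above map naturally onto a simple data structure. The sketch below is a minimal, hypothetical AI inventory: each system records its purpose, source, data, and accountable owner; a made-up tiering rule ranks it by patient impact; and a check flags systems missing the controls their tier calls for. The tier rule, field names, and example systems are assumptions for illustration, not a published framework.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1       # administrative use, no patient-level decisions
    MODERATE = 2  # informs care, but a clinician makes every decision
    HIGH = 3      # acts on care decisions without clinician sign-off


@dataclass
class AISystem:
    name: str
    owner: str                 # accountable department ("" if nobody owns it)
    purpose: str
    source: str                # "vendor" or "in-house"
    data_sources: list = field(default_factory=list)
    affects_patient_care: bool = False
    autonomous: bool = False   # does it act without clinician sign-off?
    monitored: bool = False    # are outcomes tracked after deployment?


def tier(s: AISystem) -> RiskTier:
    # Hypothetical rule: patient impact sets the tier; autonomy raises it.
    if s.affects_patient_care:
        return RiskTier.HIGH if s.autonomous else RiskTier.MODERATE
    return RiskTier.LOW


def governance_gaps(inventory):
    """List (system, gap) pairs where a system lacks the controls its tier requires."""
    gaps = []
    for s in inventory:
        t = tier(s)
        if not s.owner:
            gaps.append((s.name, "no accountable owner"))
        if t >= RiskTier.MODERATE and not s.monitored:
            gaps.append((s.name, "no outcome monitoring"))
        if t == RiskTier.HIGH:
            gaps.append((s.name, "needs human-in-the-loop review"))
    return gaps


inventory = [
    AISystem("ambient-scribe", "Clinical Informatics", "draft visit notes", "vendor",
             ["visit audio"], affects_patient_care=True, monitored=True),
    AISystem("denial-predictor", "", "flag claims likely to be denied", "in-house",
             ["claims"]),
    AISystem("ed-auto-triage", "Emergency Dept", "reorder ED queue", "vendor",
             ["vitals", "labs"], affects_patient_care=True, autonomous=True),
]

for name, gap in governance_gaps(inventory):
    print(f"{name}: {gap}")
```

Even a spreadsheet-level version of this answers the first questions a regulator or payer will ask: what is running, who owns it, and how risky it is.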

“AI adoption is outpacing health system governance” has become a common refrain in the mid-2020s.

4. Bias, Fairness, and Generalization Failures

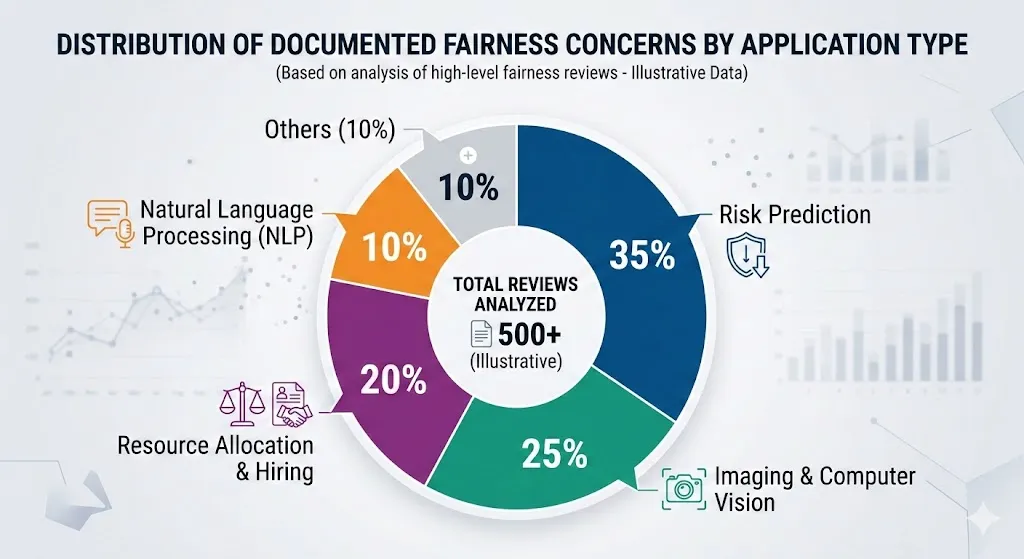

Reducing bias and improving fairness remain among the hardest AI problems in 2026. Systematic reviews of fairness in healthcare AI reveal recurring patterns:

Some demographic groups are underrepresented in training data, such as people with darker skin in dermatology image sets, which lowers diagnostic accuracy for those groups.

Algorithms that optimize for proxies such as cost or utilization can encode existing inequalities, for example by underestimating the needs of historically underserved groups.

Models trained at only a few institutions often perform poorly when applied to different populations, devices, or care settings.

A well-known example is a U.S. algorithm that used healthcare costs rather than health needs to allocate care-management resources. As a result, Black patients received fewer resources than White patients who were equally sick. Dermatology AI has also performed worse at detecting melanoma in people with darker skin because it was trained mostly on images of lighter skin.
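A basic fairness audit starts by disaggregating performance instead of reporting one overall number. The minimal sketch below computes sensitivity (the share of true cases the model catches) separately for each subgroup and reports the largest gap. The toy labels and group names are invented for illustration; real audits would use several metrics and confidence intervals, since small subgroups give noisy estimates.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """Share of true cases the model catches, computed separately per subgroup."""
    caught = defaultdict(int)
    positives = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                caught[group] += 1
    return {g: caught[g] / positives[g] for g in positives}


def max_gap(rates):
    # Difference between the best- and worst-served subgroups.
    return max(rates.values()) - min(rates.values())


# Toy data: four true cases in each group.
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 0, 1]
groups = ["A"] * 5 + ["B"] * 5

overall = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1) / sum(y_true)
rates = sensitivity_by_group(y_true, y_pred, groups)
print(overall)         # 0.5
print(rates)           # {'A': 0.75, 'B': 0.25}
print(max_gap(rates))  # 0.5
```

The overall sensitivity of 0.5 looks like one mediocre model; disaggregated, it is a model that catches three times as many cases in group A as in group B.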

A systematic review of obstacles reveals that the lack of generalization—where models trained on limited datasets underperform in unfamiliar settings—erodes clinician trust and exacerbates patient safety issues.

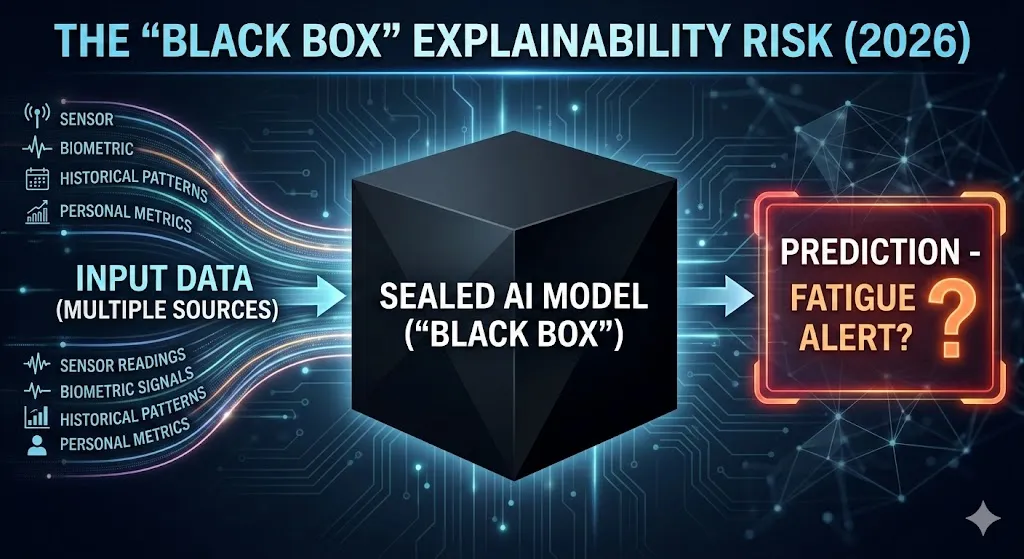

5. Black-Box Risk, Explainability, and Transparency

AI is hard to work with when its algorithms are complex and its reasoning is opaque, especially in high-stakes fields like healthcare. Clinicians are reluctant to base treatment decisions on systems whose internal logic they cannot question or challenge.

The systematic review of barriers to the integration of AI in healthcare consistently underscores explainability and intelligibility as critical issues:

Black-box models, especially proprietary algorithms, limit visibility into how features drive predictions.

Even today, “explainable AI” methods often produce explanations that do not match how clinicians reason.

Regulators are beginning to demand more than performance metrics; they also want evidence of interpretability and risk mitigation.

A Case Study of the Epic Sepsis Model

The Epic Sepsis Model (ESM), a proprietary sepsis prediction model, is often cited as a cautionary example. A study of 27,697 patients (38,455 hospitalizations) at Michigan Medicine found that the model:

Missed two-thirds of sepsis cases.

Generated many false alarms, alerting on about 18% of all hospitalizations at a commonly used threshold.

Had an area under the ROC curve (AUROC) of 0.63, far below the performance the developer reported.

Contributed to alert fatigue, as clinicians received many alerts that did not help them.
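The metrics in the Michigan evaluation (sensitivity, share of hospitalizations alerted, and AUROC) are straightforward to compute on a hospital’s own data before go-live, which is the essence of local validation. The sketch below computes them, plus positive predictive value, from a list of risk scores and outcome labels at a chosen alert threshold. The scores, labels, and threshold are toy values, not data from the study, and the AUROC is computed by brute-force pairwise comparison, which is fine for illustration but slow for large cohorts.

```python
def auroc(scores, labels):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def threshold_report(scores, labels, threshold):
    """Summarize alert behaviour at one threshold, as clinicians would experience it."""
    alerts = [s >= threshold for s in scores]
    true_alerts = sum(1 for a, y in zip(alerts, labels) if a and y == 1)
    n_alerts = sum(alerts)
    return {
        "sensitivity": true_alerts / sum(labels),  # share of sepsis cases caught
        "alert_rate": n_alerts / len(scores),      # share of hospitalizations alerted
        "ppv": true_alerts / n_alerts if n_alerts else float("nan"),
        "auroc": auroc(scores, labels),            # threshold-independent ranking quality
    }


# Toy cohort: 10 hospitalizations, 3 with sepsis.
scores = [9, 7, 3, 8, 6, 2, 1, 5, 4, 2]
labels = [1, 0, 1, 0, 0, 0, 0, 1, 0, 0]
report = threshold_report(scores, labels, threshold=6)
print({k: round(v, 2) for k, v in report.items()})
# {'sensitivity': 0.33, 'alert_rate': 0.4, 'ppv': 0.25, 'auroc': 0.67}
```

Reporting the threshold-dependent numbers alongside AUROC matters: a model can rank patients reasonably well overall and still, at the threshold actually deployed, miss most cases while alerting on a large share of admissions.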

Because the model is proprietary, outside clinicians and researchers had little insight into how it worked or what data it was trained on. Even though it underperformed in the real world, its widespread adoption exposed broader problems with how opaque algorithms are validated, regulated, and monitored at scale.

This illustrates a broader problem with AI in healthcare: models can be technically sophisticated yet of little practical use, and without transparency, problems are hard to detect early.

6. Workforce Readiness, Skills Gaps, and Human Factors

Resistance and learning

People problems are just as important as technology problems. Studies of AI adoption identify recurring concerns:

Insufficient training on how AI systems work and how to interpret their outputs.

Fears of job loss and threats to professional identity (“Will AI take my job?”).

Fear of added workload: more alerts, more clicks, and more documentation.

Concerns regarding medical-legal liability: who bears responsibility if an AI-assisted decision causes harm to a patient?

A systematic review of barriers and facilitators reveals that inadequate knowledge of AI, issues concerning explainability, and fears of role substitution remain persistent obstacles. Clinicians often see “shadow AI” use, which is when tools are used without proper training or help.

Workforce shortages and capacity pressure

At the same time, 2026 healthcare technology trends show AI arriving amid workforce shortages and widespread burnout. Ambient clinical documentation, AI scribes, and workflow copilots are proposed as ways to reduce administrative burden and give clinicians more time with patients.

The challenge is to ensure that AI agents support and augment people’s work rather than take it over or replace it. That means co-designing products with clinicians, testing iteratively, and setting realistic expectations. One expert trend analysis advises organizations to “start planning now” for how AI-enabled care teams will change roles and responsibilities.

7. Managing Safety, Evidence, and the Lifecycle

Many AI projects fail not because the algorithm is wrong but because they lack sound plans for verifying data quality, provenance, and bias, or for evaluating outcomes. Health systems are increasingly expected to treat AI as a full lifecycle responsibility:

Planning: assessing feasibility, selecting the problem, and engaging stakeholders.

Implementation: integrating systems, training clinicians, redesigning workflows, and adding human-in-the-loop safeguards.

Sustainment: ongoing monitoring, post-deployment validation, recalibration, and decommissioning.

The PLOS ONE overview of barriers and strategies for AI implementation in healthcare organizes these factors into 12 themes, including leadership, buy-in, workflow, legal issues, training, data, evaluation and monitoring, and ethics. Many organizations lack:

Clear, real-world criteria for success or failure.

Pipelines for monitoring model drift, bias, and unintended effects.

Structured processes for retiring models that underperform or are no longer needed.
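As one example of a drift-monitoring component, the Population Stability Index (PSI) compares how a model input or score is distributed in a baseline period versus now: PSI = Σ (current share − baseline share) × ln(current share / baseline share), summed over bins. PSI is a technique borrowed from credit-risk modeling, not one the cited studies prescribe, and the 0.1 and 0.25 cut-offs below are a common rule of thumb that should be tuned locally. The bin edges and score samples are hypothetical.

```python
import math
from bisect import bisect_right


def bin_shares(values, cut_points, eps=1e-4):
    """Share of values per bin; cut_points are interior edges, outer bins are open-ended."""
    counts = [0] * (len(cut_points) + 1)
    for v in values:
        counts[bisect_right(cut_points, v)] += 1
    # eps keeps log() finite when a bin is empty.
    return [max(c / len(values), eps) for c in counts]


def psi(baseline, current, cut_points):
    """Population Stability Index between a baseline sample and a current sample."""
    b = bin_shares(baseline, cut_points)
    c = bin_shares(current, cut_points)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_status(value, watch=0.1, act=0.25):
    # Rule-of-thumb thresholds; calibrate to each model and data source.
    if value >= act:
        return "investigate and consider recalibration"
    if value >= watch:
        return "watch"
    return "stable"


cut_points = [0.25, 0.5, 0.75]
baseline = [0.1, 0.3, 0.6, 0.9] * 25                         # 25% in each bin
current = [0.1] * 10 + [0.3] * 20 + [0.6] * 30 + [0.9] * 40  # shifted toward high risk

value = psi(baseline, current, cut_points)
print(round(value, 3), drift_status(value))  # 0.228 watch
```

A scheduled job that runs this per input feature and per output score, and routes “investigate” results to the model’s owner, is one concrete form the missing monitoring pipeline could take.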

Without these, AI in digital health can fail silently, and the failure may only come to light after patient harm, a regulatory investigation, or media coverage.

8. Economic Models, ROI, and Misaligned Incentives

From an economic perspective, AI has great potential in healthcare: it can help prevent hospitalizations, reduce waste, make staffing more efficient, and speed up coding and billing. However, surveys of health system CFOs show that most organizations still struggle to identify AI opportunities with a clear, provable return. Vendors often sell vague “AI value” instead of specific, measurable results.

Some of the most important economic issues are:

High upfront costs for licenses, infrastructure, and maintenance staff.

Difficulty determining which AI tools are improving outcomes and which are making them worse.

A mismatch between who pays (providers) and who benefits (insurers, patients, and society).

Inconsistent reimbursement for digital health and decision-support tools.
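The mismatch between who pays and who benefits is easy to see with a little arithmetic. The sketch below models a hypothetical readmission-reduction tool whose costs fall on the provider while most of the savings accrue to the payer, then computes the shared-savings rate at which the provider breaks even. The `AIInvestment` class and every dollar figure are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class AIInvestment:
    provider_costs: float      # licenses, infrastructure, maintenance staff
    benefits_by_party: dict    # who captures each dollar of benefit

    def system_net(self) -> float:
        # Value to the system as a whole, regardless of who captures it.
        return sum(self.benefits_by_party.values()) - self.provider_costs

    def provider_net(self, shared_savings_rate: float = 0.0) -> float:
        # Provider keeps its own benefit plus an agreed share of the payer's savings.
        own = self.benefits_by_party.get("provider", 0.0)
        shared = shared_savings_rate * self.benefits_by_party.get("payer", 0.0)
        return own + shared - self.provider_costs

    def break_even_share(self) -> float:
        # Smallest share of payer savings that makes the provider whole.
        shortfall = self.provider_costs - self.benefits_by_party.get("provider", 0.0)
        return max(shortfall, 0.0) / self.benefits_by_party["payer"]


# Hypothetical annual figures for one readmission-reduction tool.
tool = AIInvestment(provider_costs=450_000,
                    benefits_by_party={"provider": 150_000, "payer": 600_000})

print(tool.system_net())        # 300000: worth doing for the system as a whole
print(tool.provider_net())      # -300000.0: a loss for the party that pays
print(tool.break_even_share())  # 0.5
```

The same tool is a clear win at the system level and a clear loss for the organization that has to buy it, which is why shared-savings arrangements often decide whether a promising pilot scales.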

This is one reason so many AI pilots remain stuck at the proof-of-concept stage. Without clear economic models and plans for sharing savings, even clinically promising tools can go unadopted.

9. Trust, Social Acceptance, and Ethics

Patients, the general public, and individual clinicians must all trust AI in healthcare. Reviews of barriers and facilitators show that trust is shaped by:

Major failures or safety incidents.

News stories about AI replacing clinicians or making care less accessible.

Concerns that algorithms will encode bias or put profits ahead of patients.

Unclear consent for reusing data to train AI.

Concerns about autonomy, consent, and justice are not merely theoretical. Biased triage, opaque risk scoring for insurance coverage, and AI-driven prior authorization denials can all make care harder to obtain. A scoping review of AI fairness in clinical settings warns that health equity may be jeopardized if fairness is not explicitly addressed throughout the AI lifecycle.

In 2026, ethical governance must evolve from ad hoc committees and isolated evaluations to integrated, operationalized practices, encompassing regular bias audits, patient representation in governance, transparent public communication, and the integration of ethics at every stage of AI deployment.

Best Practices: How Leading Organizations Are Responding

Implementation studies and governance case reports point to several best practices for AI in healthcare:

Establish cross-departmental AI governance: Create an AI oversight committee that includes clinicians, data scientists, IT, legal, compliance, and ethics.

Inventory and tier AI systems: Maintain a master list of every AI tool in use, including “shadow AI.” Record each tool’s purpose, data sources, risk level, and whether it is vendor-supplied or built in-house.

Human-in-the-loop: Require clinician oversight for any system that affects diagnosis, treatment, or benefit decisions.

Standards-based integration: Use interoperable data and imaging standards (HL7, FHIR, DICOM) to avoid fragile point-to-point integrations.

Invest in data readiness: Clean, standardize, and document datasets before modeling, and track data lineage and versions.

Local validation: Independently validate vendor models on local patient populations before deployment.

Fairness and transparency: Define explicit fairness goals and metrics for each use case.
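The human-in-the-loop practice can be enforced in software rather than only in policy documents, and doing so also produces an audit trail. The minimal sketch below holds any recommendation that touches diagnosis, treatment, or coverage until a named clinician has reviewed it, and records every decision in a log that can be produced on request. The `Recommendation` class, the decision categories, and the in-memory log are hypothetical simplifications; a real deployment would persist the log and integrate with the EHR’s own sign-off workflow.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Decision types that require clinician sign-off before anything is acted on.
HIGH_IMPACT = {"diagnosis", "treatment", "coverage"}

audit_log = []  # append-only record of every release decision


@dataclass
class Recommendation:
    system: str
    decision_type: str               # e.g. "diagnosis", "scheduling"
    content: str
    reviewed_by: Optional[str] = None


def release(rec: Recommendation) -> str:
    """Hold high-impact output until a clinician signs off; log every decision."""
    if rec.decision_type in HIGH_IMPACT and rec.reviewed_by is None:
        status = "held for clinician review"
    else:
        status = "released"
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "system": rec.system,
        "decision_type": rec.decision_type,
        "reviewed_by": rec.reviewed_by,
        "status": status,
    })
    return status


print(release(Recommendation("ed-triage", "diagnosis", "possible PE")))
print(release(Recommendation("ed-triage", "diagnosis", "possible PE", reviewed_by="dr_lee")))
print(release(Recommendation("bed-planner", "scheduling", "open 4 beds on ward 7")))
print(len(audit_log))  # 3
```

Because the gate and the log live in the same function, there is no path by which a high-impact output reaches a patient without leaving a record of who reviewed it.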

Future AI Challenges: 2026 to 2030

There are a number of trends in AI in healthcare for 2026 that point to the next set of problems and opportunities:

Autonomous AI agents: Agents that can plan, order tests, and summarize charts will blur the line between tool and coworker.

Virtual hospitals and 24/7 monitoring: As the Internet of Medical Things (IoMT) grows, AI will draw more heavily on data from wearables and home devices.

Regulatory change: Expect stricter requirements for fairness, explainability, and lifecycle reporting as regulators pilot frameworks for monitoring real-world performance.

Converging clinical and operational AI: Systems that optimize patient flow, staffing, and the revenue cycle at the same time will raise new ethical questions.

Done right, AI could shift healthcare over the next decade from reactive, episodic care to proactive, continuous, and personalized care. Done wrong, it could deepen existing problems, erode trust, and undermine clear, accountable decision-making.

Questions and Answers: The Biggest Challenges for AI in Healthcare in 2026

1. When did AI become a field of study, and why is that important for healthcare now?

AI became a formal academic field in the 1950s, and the 1956 Dartmouth Workshop is widely regarded as its starting point. That makes AI a relatively young, still-maturing technology, and healthcare is now deploying it in safety-critical, heavily regulated settings. That is why governance, fairness, and lifecycle management matter so much.

2. What are the main barriers to using AI in healthcare?

A well-known systematic review lists six main types of barriers: ethical, technological, liability and regulatory, workforce, social, and patient safety. A more recent mixed-methods study organizes implementation barriers into 12 themes, including leadership, workflow, data, training, evaluation, maintenance, and ethics, spanning the planning, implementation, and sustainment phases.

3. Why do so many AI pilots in healthcare fail?

Up to 70% of AI pilots fail at least once, and usually not because the algorithms are wrong. The workflows are misaligned, the endpoints are unclear, the data isn’t ready, or governance is too weak. Scaling from pilot to system-wide deployment is also hard because infrastructure is fragmented, standards-based integration is missing, and credible ROI models are lacking.

4. How serious is the issue of bias and fairness in AI healthcare models?

Fairness reviews and scoping studies show that bias is common, driven by unrepresentative training data, proxy variables that reflect existing inequality, and context-specific implementation problems. These biases can lead to misdiagnoses, unequal access to resources, and a loss of trust. That is why fairness metrics, diverse datasets, and regular bias audits are now essential for responsible AI in healthcare.

5. What should healthcare leaders do first to deal with AI risks in 2026?

Three steps come first: set up a cross-functional AI governance committee with clear authority; inventory all operational AI systems and categorize their risks; and require human-in-the-loop review and ongoing monitoring for any AI that affects diagnosis, treatment, or coverage decisions. Investing in data readiness, staff training, and fairness-focused evaluations helps make governance part of everyday operations.