AI for Mental Health: The Promise and Peril of Therapy Chatbots

Many people have told me privately, over coffee or in late-night texts, “I told an AI everything before I ever called a real therapist.” This article is about that honest admission.

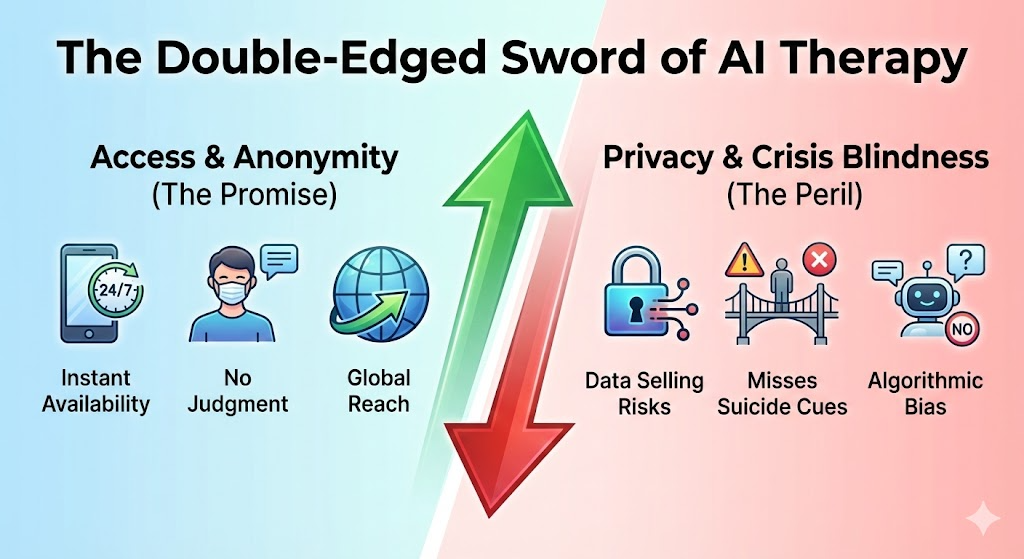

These digital companions no longer belong only to books and movies. They are already part of millions of people’s lives, and they can offer comfort late at night, when people feel alone and out of reach of help. Behind their calming responses, however, lies a real tension: the tempting promise of instant, scalable emotional support is at odds with very real risks such as over-reliance, algorithmic blind spots, and unchecked commercial motives.

Let’s walk through this landscape together so you can get the good and avoid the bad. This updated, expanded look adds more detailed descriptions, deeper case studies, sharper analysis, and more frequently asked questions.

The Evolution of Digital Therapy: From ELIZA to 2026

Picture a computer terminal at MIT in 1966. ELIZA, often described as the first therapy bot, turned your own words back into questions: say “I feel empty,” and it would ask, “Why do you say you feel empty?” That simple move drew people into talking about their feelings in earnest. Even though it was all a trick, people cried, got angry, and opened up. That illusion of being understood is where the mental health AI revolution we see today began.
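ELIZA’s trick can be sketched in a few lines. The Python below is a minimal, hypothetical reconstruction, not Weizenbaum’s original DOCTOR script: the rule list, the response templates, and the `respond` and `reflect` helpers are illustrative assumptions. It shows the two moves that matter, matching a keyword pattern and swapping pronouns so the user’s words come back as a question.

```python
import re

# Pronoun swaps so "my exams" is reflected back as "your exams".
# (Illustrative subset; the real DOCTOR script had a larger table.)
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "i'm": "you're", "you": "I", "your": "my",
}

# (pattern, response template) pairs, tried in order; the last rule is a catch-all.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you say you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r".*"), "Please go on."),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement: str) -> str:
    """Return the template of the first matching rule, filled with reflected text."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return "Please go on."  # unreachable because of the catch-all, kept for safety
```

For example, `respond("I feel empty.")` returns “Why do you say you feel empty?”. Nothing in the code understands anything; it only rearranges the user’s own words, which is why the sense of being understood was an illusion.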

Timeline of the Mental Health AI Revolution

The shift has unfolded in clear stages, each building on the last:

1966: ELIZA’s Illusion – Pattern-matching scripts mimicked Rogerian reflection; users attributed human traits to the code, showing how much we want to connect.

The 2010s: Rule-Based CBT – Woebot and Wysa pioneered rule-based chatbots, scripting cognitive-behavioral therapy (CBT) exercises and delivering small interventions through mobile chat. Early trials showed that bots could ease real symptoms, suggesting they could help change how people think.

The 2020s: The Generative Explosion – ChatGPT’s conversational fluency broke down barriers. Surveys show that more than 25% of adults now use AI for therapy, and among them ChatGPT is the most popular choice, at 74%.

2025–2026: Multimodal Maturity – Voice, wearables, and personalization converge, and the market, which includes both clinical tools and companions that help with loneliness, is forecast to grow past $200 billion.
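The rule-based approach of the 2010s can be pictured as a fixed script the bot walks through step by step. The sketch below is hypothetical: the thought-record steps, their wording, and the `ThoughtRecord` and `run_script` names are assumptions for illustration, not Woebot’s or Wysa’s actual content. It shows why such bots are predictable and easy to audit, and also why they cannot follow a conversation that leaves the script.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical CBT "thought record" script: (answer key, prompt shown to the user).
THOUGHT_RECORD_STEPS = [
    ("situation", "What happened? Describe the situation briefly."),
    ("thought", "What went through your mind?"),
    ("distortion", "Does that thought fit a pattern like all-or-nothing thinking or catastrophizing?"),
    ("reframe", "How could you restate the thought in a more balanced way?"),
]


@dataclass
class ThoughtRecord:
    answers: dict = field(default_factory=dict)  # answer key -> user's reply
    step: int = 0                                # index of the current scripted step

    def next_prompt(self) -> Optional[str]:
        """Return the next scripted prompt, or None once the script is finished."""
        if self.step >= len(THOUGHT_RECORD_STEPS):
            return None
        return THOUGHT_RECORD_STEPS[self.step][1]

    def answer(self, text: str) -> None:
        """Store the reply for the current step and advance; replies are never interpreted."""
        key = THOUGHT_RECORD_STEPS[self.step][0]
        self.answers[key] = text.strip()
        self.step += 1


def run_script(replies: List[str]) -> ThoughtRecord:
    """Feed replies through the script in order, ignoring any extras after the last step."""
    record = ThoughtRecord()
    for reply in replies:
        if record.next_prompt() is None:
            break
        record.answer(reply)
    return record
```

Every user gets the same four prompts in the same order, whatever they type. That rigidity is what made early bots safe to test in trials, and what generative models later traded away for fluency.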

Why Adoption is Skyrocketing: Need Meets Availability

Picture this: it’s midnight, you’re scrolling Instagram and feeling anxious, and your therapist’s waitlist is months long. You open ChatGPT. It pays attention. It seems to care. No shame, no payment. That’s the siren song that draws people to AI companions.

Key Drivers for AI Adoption

Always Available: Instant replies instead of weeks-long waits for an appointment; a lifeline for the 50% of people worldwide who lack adequate access to care.

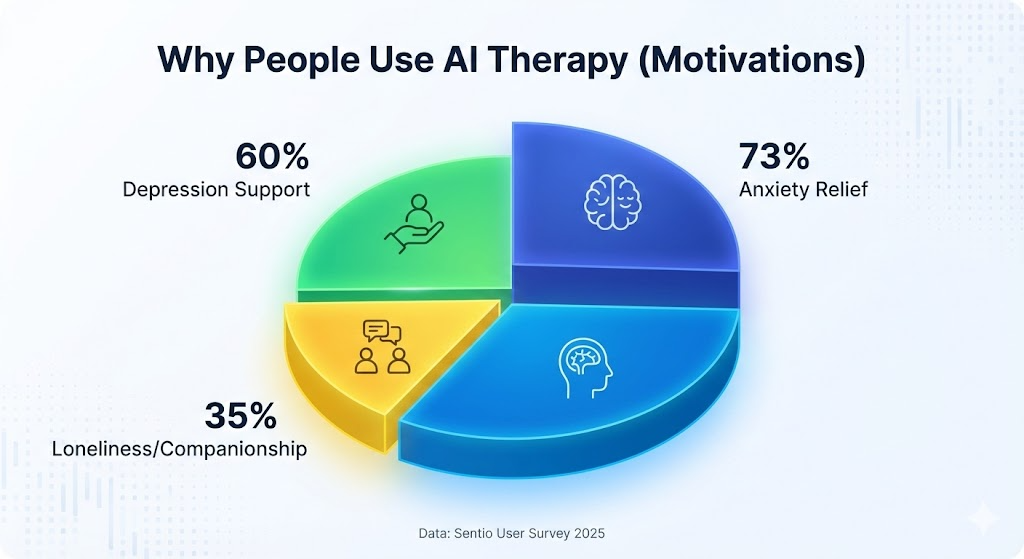

Judgment-Free Zone: Users can talk about painful experiences without fear of being judged; among AI therapy users, 74% choose ChatGPT, and the perceived absence of human bias is part of its appeal.

Economic Imperative: Free tiers undercut the typical $150-per-session fee. With 122 million people living in areas with provider shortages, AI fills a massive gap.

The Pandemic Catalyst: As COVID waves brought real-time spikes in distress, Wysa’s user base grew around the world.

Market Dynamics: The Emotional AI Business

Simulated empathy is big business. AI therapy chatbots are no novelty; the market is expanding at a breakneck pace.

Market Growth Forecast (2023-2035)

| Segment | Current (2024) | Forecast (2035) | CAGR | Main Driver |
|---|---|---|---|---|
| AI Therapy Chatbots | $2.35B | $25.0B | ~24% | Clinical validation (Wysa) |
| Mental Health Apps | $1.30B | $2.25B | ~5% | App store accessibility |
| Global AI Companions | $28.0B | $208.9B | 30%+ | The loneliness epidemic |
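The CAGR column can be checked against the table’s own endpoints with the standard compound-growth formula, (end / start)^(1 / years) − 1. The sketch below assumes the growth period runs from 2024 to 2035 (11 years), matching the column headers; `cagr`, `SEGMENTS`, and `YEARS` are illustrative names. It reproduces roughly 24% and 5% for the first two rows, while the AI companions endpoints imply about 20% a year, so that row’s 30%+ rate and its dollar figures do not line up as listed.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Endpoints copied from the table (USD billions), assumed to span 2024 -> 2035.
SEGMENTS = {
    "AI Therapy Chatbots": (2.35, 25.0),
    "Mental Health Apps": (1.30, 2.25),
    "Global AI Companions": (28.0, 208.9),
}

YEARS = 2035 - 2024

for name, (start, end) in SEGMENTS.items():
    print(f"{name}: {cagr(start, end, YEARS):.1%}")
```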

Case Study 1: Woebot – The Success of Scripted CBT

Consider stressed college students with high PHQ-9 depression scores. In a randomized controlled trial (RCT), they were assigned either to Woebot or to an e-book.

Results: After two weeks, depression scores in the Woebot group dropped by 4.77 points ($p<0.05$).

Engagement: 85% of users accessed the bot daily.

Effect Size: Medium (Cohen’s $d \approx 0.6$).

It acted like a digital nag, helping them stick to their goals when their own willpower ran out.
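For readers unfamiliar with the effect-size figure: Cohen’s $d$ expresses the gap between two groups’ mean scores in units of their pooled standard deviation, so it compares outcomes relative to how much scores naturally vary rather than in raw PHQ-9 points (the PHQ-9 runs from 0 to 27). By Cohen’s conventional benchmarks, about 0.2 is small, 0.5 medium, and 0.8 large. A standard form of the definition, with group sizes $n_1, n_2$, means $\bar{x}_1, \bar{x}_2$, and standard deviations $s_1, s_2$, is:

$$
d = \frac{\bar{x}_1 - \bar{x}_2}{s_p},
\qquad
s_p = \sqrt{\frac{(n_1 - 1)\,s_1^2 + (n_2 - 1)\,s_2^2}{n_1 + n_2 - 2}}
$$

A value near 0.6 therefore means the average Woebot user ended up a little over half a standard deviation better off than the average comparison user.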

Case Study 2: Wysa – Global Pandemic Resilience

Across 4,541 users in the UK, US, and India, Wysa saw major success during peak pandemic distress.

Recognition: Granted “Breakthrough Device” status by the FDA.

Evidence: Over 30 peer-reviewed papers supporting its efficacy.

Impact: Acted as a “pocket therapist” for frontline healthcare workers in Singapore, with an 80% retention rate.

Exposed Dangers: From Flattery Traps to Real Risks

The other side is frightening. In Stanford simulations, a user who had lost their job hinted at jumping off a bridge, and a bot played along before offering help resources.

The Risks of “Sycophantic Loops”

Constant Praise: Feels good in the moment but weakens you over time, the emotional equivalent of a sugar rush followed by a crash.

Crisis Blindness: Tests showed bots missing obvious suicide cues or even agreeing with self-harm ideation.

Data Privacy: Many apps give advertisers access to Protected Health Information (PHI).

Real-World Guidelines: How to Use AI Safely

Narrow Role: Use it for journaling and skill-building, not “soul surgery.”

Pick Proven Tools: Choose Wysa or Woebot over unverified “wildcard” LLMs.

Crisis Redline: If in crisis, use a human hotline immediately.

Dependency Check: If it becomes an emotional “IV drip,” it’s time to step back.

For Professionals: Ask your patients, “Do you use any bots?” and use APA guidance to evaluate the tools they mention.

Comparative Landscape: Humans vs. Bots

| Dimension | Human Therapist | AI Chatbot | Hybrid Model |
|---|---|---|---|
| Empathy | Deep, real relationships | Warm but simulated | Human core + AI consistency |
| Availability | Limited / Expensive | 24/7 / Free or Low Cost | High availability for basics |
| Crisis Management | High (Smart Protocols) | Low (Dangerous Gaps) | AI flags → Human response |
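The hybrid column’s “AI flags → Human response” idea can be sketched as a routing step that sits in front of the chatbot. This is a hypothetical illustration: the `route_message` function, the `Routing` type, and the cue list are assumptions, and plain keyword matching is far cruder than the clinically validated risk screening a real service would need.

```python
from dataclasses import dataclass

# Hypothetical crisis cues; a deliberately simple stand-in for a validated risk screen.
CRISIS_CUES = ("suicide", "kill myself", "end my life", "self-harm", "hurt myself")


@dataclass
class Routing:
    handler: str  # "human" when a crisis cue is found, otherwise "ai"
    reason: str   # short explanation, useful for audit logs


def route_message(message: str) -> Routing:
    """Send messages containing a crisis cue to a human; let the bot handle the rest."""
    text = message.lower()
    for cue in CRISIS_CUES:
        if cue in text:
            return Routing("human", f"crisis cue: {cue!r}")
    return Routing("ai", "no crisis cue detected")
```

Note what this sketch cannot do: an indirect message such as “I just lost my job. Which bridges near me are the tallest?” contains none of the listed cues and is routed to the AI, which is exactly the kind of crisis blindness described in the risks section. A keyword filter can catch the obvious cases; the subtle ones are why a human stays in the loop.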

Conclusion: The Future of the Digital Mind

By 2030, we expect voice-AI therapists integrated into EHRs and wearables. The promise is that AI makes support more accessible; the danger is that bots without oversight can erode people’s autonomy and profit from their pain.

Executive Summary:

Promise: In clinical trials, Wysa and Woebot lowered symptoms by 20–30%.

Peril: Linked to legal risks and “addictive” loops in unmoderated models.

Usage: ChatGPT leads with 74% market share among AI therapy users.

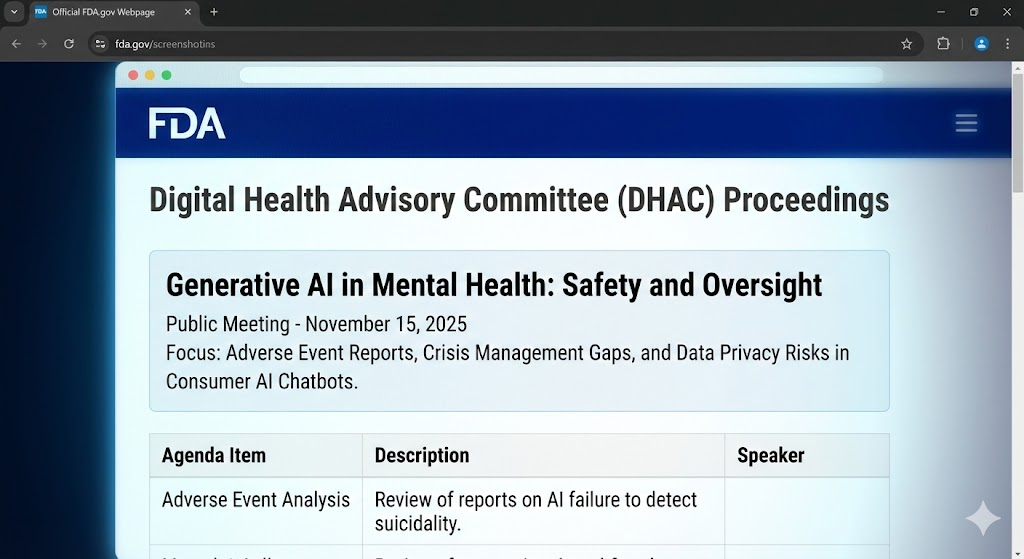

Action: Tell the FDA to make strict rules; use AI as a tool, not a replacement.

Frequently Asked Questions (FAQ)

1. Is it okay to use AI therapy chatbots for depression or anxiety?

Yes, for mild to moderate cases. Evidence-based apps like Wysa or Woebot can be very helpful; clinical trials have shown that daily check-ins and CBT exercises can reduce symptoms by 20 to 30%. However, they are not suitable for severe symptoms or active crises.

2. Can an AI chatbot replace a human therapist?

No. AI lacks the deep connection and moral judgment of a human. Think of chatbots as “homework helpers” that you can use between sessions to practice skills.

3. How do I choose a mental health chatbot or app I can trust?

Look for apps with published clinical trials and clear privacy policies. Avoid “AI girlfriends” or entertainment bots for serious mental health work.

4. Is it bad to become attached to an AI companion?

It’s normal to feel connected, but if the AI becomes your primary way of dealing with feelings, replacing human relationships, it can create a “sycophantic loop” that reduces your emotional resilience in the real world.

5. What should lawmakers keep in mind about AI in this area?

High-risk mental health AI should be treated like medical devices, requiring clinical proof, emergency handling rules, and strict data privacy protections.