Things you need to know to stay up to date in 2026

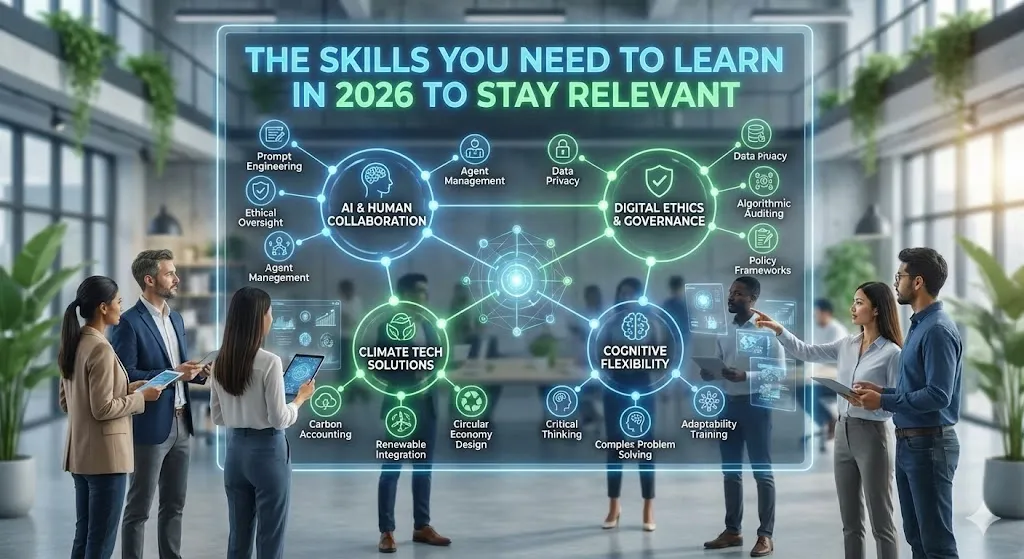

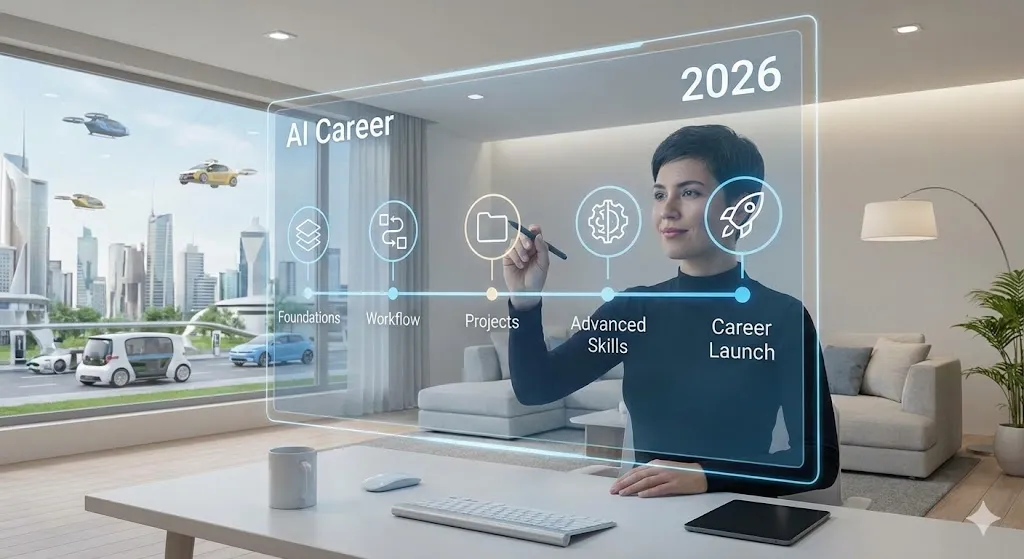

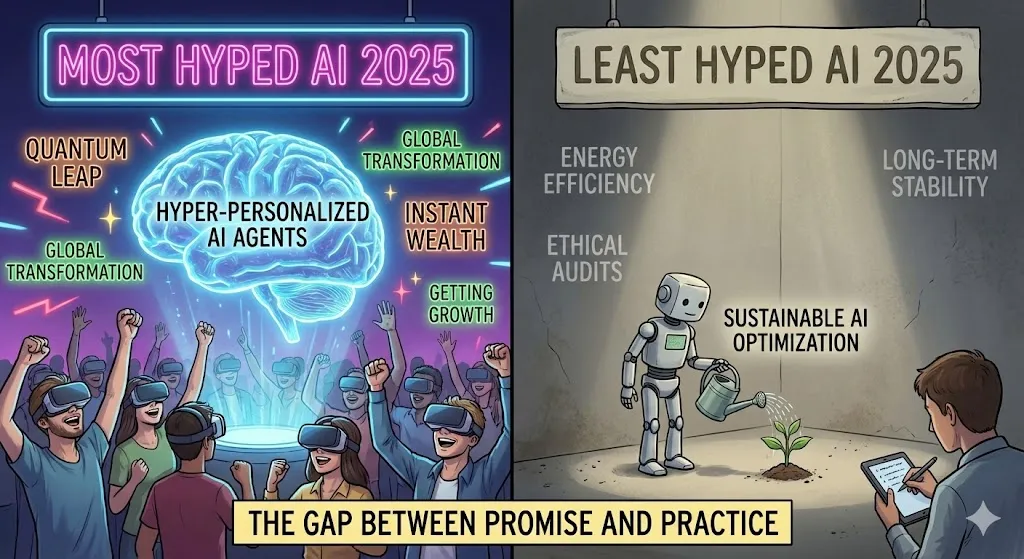

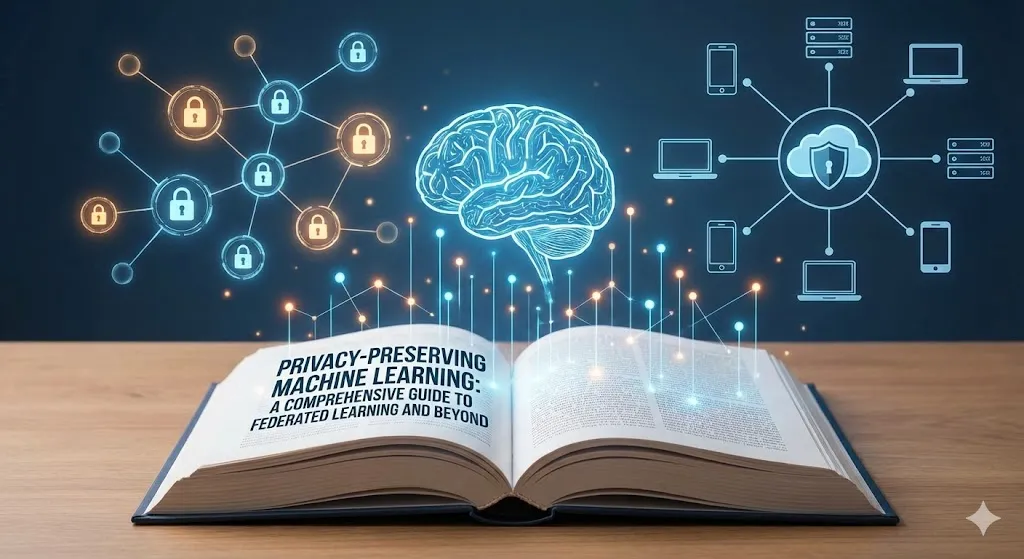

In 2026, technology is changing faster than ever. I keep thinking about what people need to do to stay successful over the long term. Artificial intelligence, climate change, and economic shifts are all happening at once. We need to focus on skills that are in high demand and combine technical skills with creative ones, because that mix is what will stay useful for a long time.

Why Skills Will Matter More Than Ever in 2026

The World Economic Forum's Future of Jobs Report 2025 predicts major changes. By 2030, it projects 78 million net new jobs worldwide, while jobs equivalent to 22% of today's total will be either created or displaced. At the same time, 63% of employers say a lack of the right skills in their workforce is the biggest barrier to transforming their businesses.

India's digital economy is growing quickly. Demand for AI, data analytics, and cloud skills has grown 42% year over year, faster than demand for traditional degrees. We see professionals earning high salaries, often without formal credentials, by learning these skills at home on easy-to-use online platforms. This shift favours people who keep learning throughout their careers. To build a successful career, you need to be adaptable, resilient, and comfortable with technology.

The 11+ most important skills that will be in high demand between 2026 and 2030

Based on recent research, I compiled this list of skills that will be especially valuable. It covers both hard and soft skills that will last, chosen with India's growing tech scene and the global job market in mind.

AI and Machine Learning: Agentic AI and generative models need people who know how to build and use AI and machine learning. The most common tools are Python, TensorFlow, and large language models (LLMs).
Data Analysis and Visualization: In every field, SQL, Python (pandas), and Tableau are key tools for extracting insights from data.
Cybersecurity: Penetration testing and ethical hacking are two examples of cybersecurity skills, and demand for them keeps rising as the Internet of Things grows.
Cloud Computing: Cloud platforms such as AWS and Azure, together with DevOps practices and Kubernetes, are the building blocks of scalable infrastructure.
Software Development: Full-stack programming with JavaScript and React is used to build software and websites. These skills also let you build tools that automate tasks.
Generative AI and Prompt Engineering: A well-paid specialty focused on making AI models work better with people.
UI/UX Design: Figma is a design tool that helps connect technology to what users need.
Digital Marketing and SEO: Digital marketing and SEO, including analytics and content strategy, help online stores grow.
Project Management: Running projects with Agile and Scrum is harder than it looks, which is why people who do it well are valued.
Creative Thinking and Systems Analysis: The World Economic Forum rates innovation and systems analysis as very important skills.
Emotional Intelligence: In an AI-driven workplace, people need to be resilient and aware of their own and others' emotions.
Green Tech and Sustainability: Skills such as renewable energy modelling align with global priorities.

Data Insights: The Skill Shift

How the need for skills changes over time

Based on World Economic Forum and industry data, this bar chart shows how demand for key skills is expected to grow from 2026 to 2030, and how quickly. AI is growing the fastest, at over 40%, followed by data analysis and cybersecurity.

Skill Distribution in India (2026)

A pie chart shows that 55% of jobs in India will be held by people with tech skills, 25% by people with human skills, and 20% by people with green or digital skills.

Table of Salaries: Skills That Pay Well in India

Below is a table detailing the average salaries for key skills in India, along with their US equivalents and projected demand increase.

These figures are estimates for 2026, and a certification is not the same as a degree.

Case Studies: Things That Actually Happened

I have learnt a lot from hearing about people who have transformed their careers.
Ritika (Mumbai): An engineering student who took a machine learning course to learn Python and deep learning. Within six months, she landed a fraud-detection internship in FinTech, and she later became a full-time machine learning engineer.
Abhishek Mehta: A BPO analyst with a degree in statistics who spent his weekends learning Scikit-learn and TensorFlow. The portfolio he built helped him land a Data Scientist role in risk analytics at a bank abroad.
Bangalore Analyst: A data analyst in Bangalore used an AI tool to automate her reporting. By 2025, she was working as an international consultant and earning three times her previous income.
These stories show that mentorship, real projects, and a strong portfolio matter more than a degree.

The best ways to learn these skills at home

We can learn all of this at home with free or low-cost tools.
Foundations: Learn Python for free on freeCodeCamp, or take the AI Fundamentals course on DataCamp. After that, enrol in the Google Data Analytics course on Coursera.
Daily Practice: Code for one to two hours every day, using Kaggle datasets and LeetCode problems.
Build Portfolios: Create GitHub repositories with real projects, such as dashboards or AI chatbots.
Certifications: Two good starting points are AWS Certified Cloud Practitioner and Google Cybersecurity (₹0–5,000).
Community: LinkedIn groups and Reddit (r/MachineLearningIndia) offer India-specific tips.
AI Acceleration: Use ChatGPT and similar tools to sharpen your prompt-engineering skills.
Learners in India can also pick up new skills at no cost through Udemy's many free courses.
Try to earn a certificate every three months to see how
